Concept

For this assignment, I wanted to explore Autonomous Agents. I took inspiration from Craig Reynolds’ Steering Behaviors, specifically the idea that a vehicle can “perceive” its environment and make its own decisions.

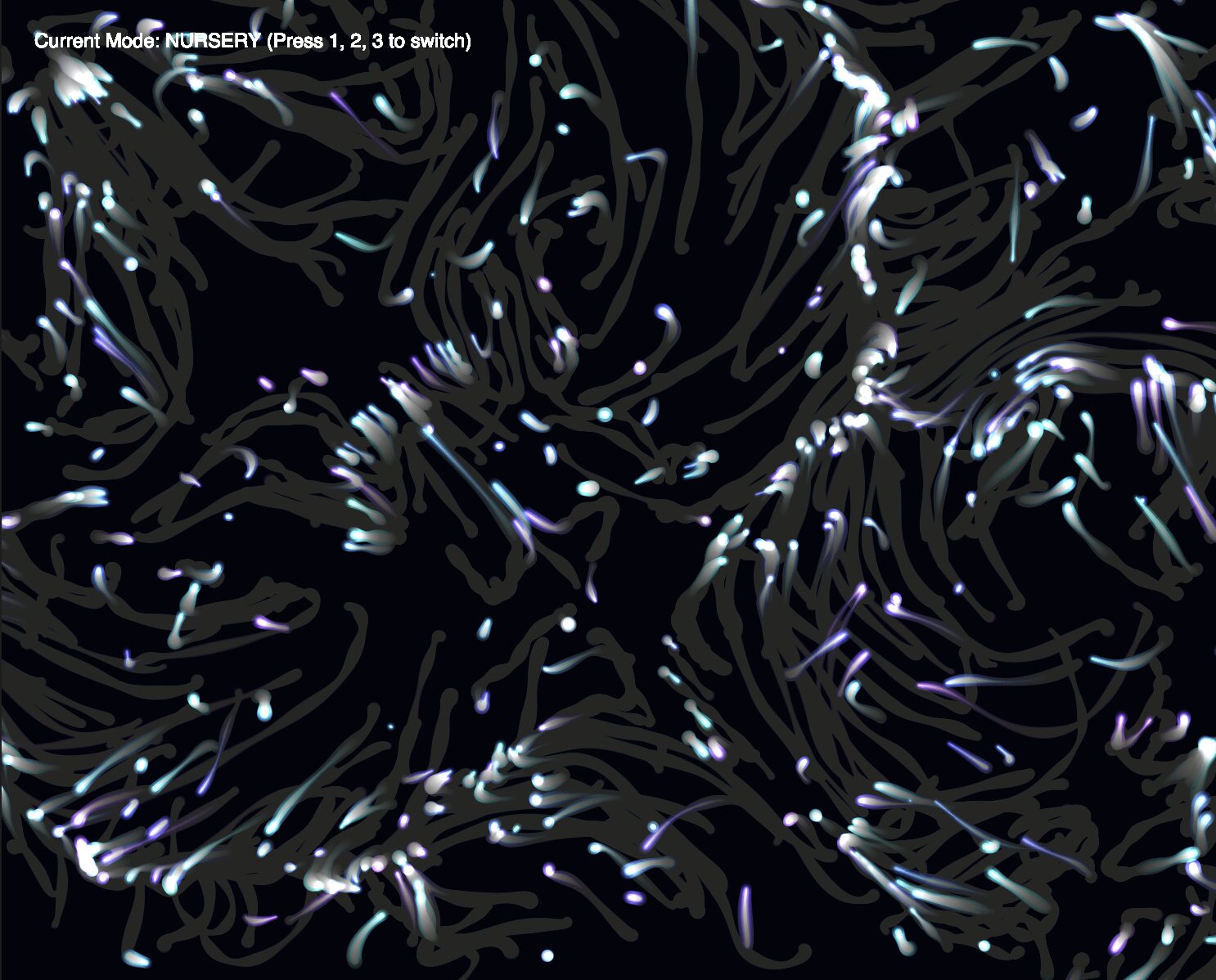

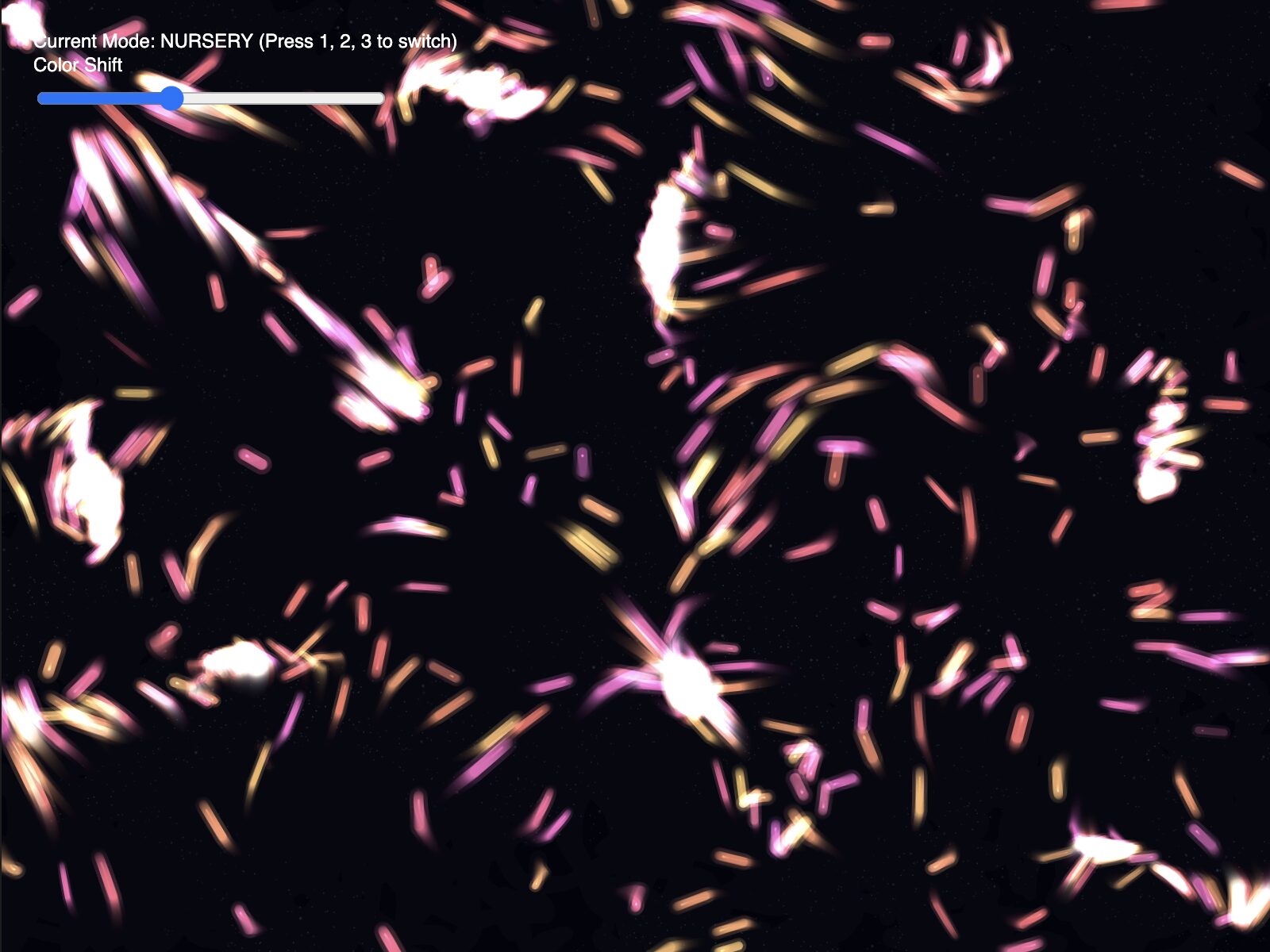

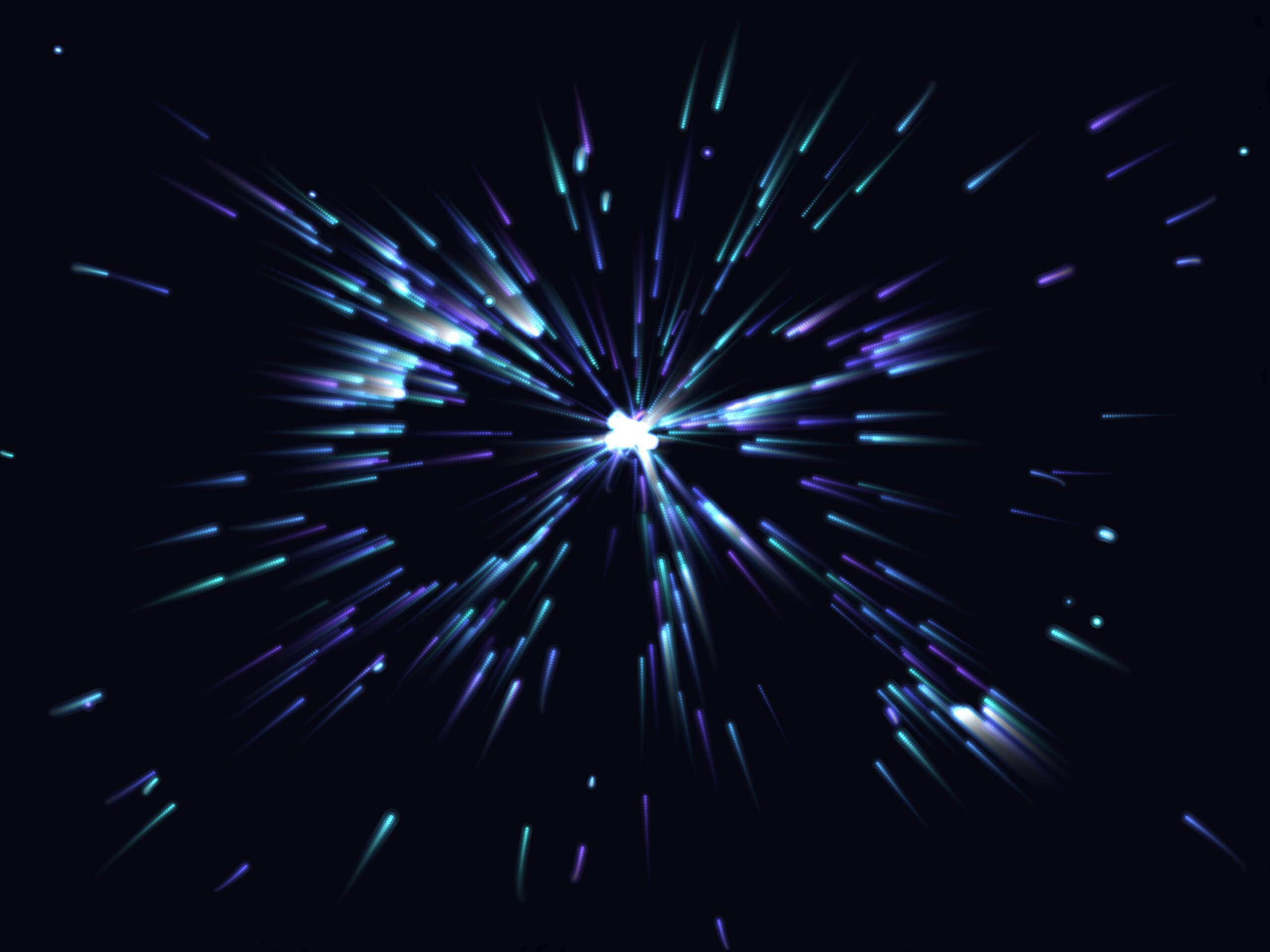

My goal was to create a Living City. I designed a system of commuter vehicles that dutifully follow a looping traffic path, and a police car (the Interceptor) controlled by the mouse. As the police car approaches, the commuters get out of its way. The project explores the tension between order (path following) and chaos (fleeing from danger).

Sketch

Process and Milestones

I started by building a multi-segment path. The biggest challenge was making the vehicles drive in a continuous loop. I used the modulo operator (%) in my segment loop so that when a vehicle reaches the final point of the rectangle, its “next target” wraps around to the first point.
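
The wrap-around can be sketched in plain JavaScript as follows. This is a minimal illustration rather than the sketch's actual code: the corner coordinates and the `loopSegments` helper are hypothetical, and plain `{x, y}` objects stand in for p5 vectors.

```javascript
// Hypothetical corner points of a rectangular loop (values are illustrative).
const points = [
  { x: 100, y: 100 },
  { x: 500, y: 100 },
  { x: 500, y: 300 },
  { x: 100, y: 300 },
];

// Pair each point with its successor; the last point pairs with the first.
function loopSegments(pts) {
  const segments = [];
  for (let i = 0; i < pts.length; i++) {
    const a = pts[i];
    const b = pts[(i + 1) % pts.length]; // index wraps to 0 after the last point
    segments.push({ a, b });
  }
  return segments;
}

const segments = loopSegments(points);
```

With four points this yields four segments, and the fourth one ends at `points[0]`, which is what closes the loop.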

At first, my vehicles were “shaking” as they tried to stay on the path. I realized they were reacting to where they are right now, which is always slightly off the line. I implemented Future Perception: the vehicle now calculates a “predict” vector that points 25 pixels ahead of its current position, along its velocity. By steering based on where it will be, the movement became much smoother and more lifelike.
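
To make the look-ahead arithmetic concrete, here is a small sketch in plain JavaScript. The `predictPosition` helper is hypothetical and uses plain `{x, y}` objects; it assumes the look-ahead is the velocity rescaled to a fixed length, which is what `setMag(25)` does in p5.

```javascript
// Return the point `lookAhead` pixels ahead of `pos` in the direction of `vel`.
function predictPosition(pos, vel, lookAhead = 25) {
  const speed = Math.hypot(vel.x, vel.y);
  if (speed === 0) {
    // No heading to extrapolate along, so the prediction is the current position.
    return { x: pos.x, y: pos.y };
  }
  const scale = lookAhead / speed; // rescale the velocity to length `lookAhead`
  return { x: pos.x + vel.x * scale, y: pos.y + vel.y * scale };
}
```

For example, a vehicle at the origin with velocity `(3, 4)` has a predicted position of `(15, 20)`, regardless of how fast it is actually moving.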

The most interesting part of the process was coding the transition between behaviors. I wrote a conditional check: if the distance to the Interceptor (mouse) is less than 120 pixels, the Commuter completely abandons its followPath logic and switches to flee. I also increased their maxSpeed during this state to simulate “panic.” Once the danger passes, they naturally drift back toward the road and resume their commute.
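
The switch described above might look roughly like this in plain JavaScript. Only the 120-pixel threshold comes from the project; the `chooseBehavior` helper and both speed values are assumptions made for illustration.

```javascript
const FLEE_RADIUS = 120; // threshold from the write-up, in pixels
const CRUISE_SPEED = 3;  // hypothetical normal maxSpeed
const PANIC_SPEED = 6;   // hypothetical raised maxSpeed while fleeing

// Decide which behavior a commuter should run this frame.
function chooseBehavior(commuterPos, interceptorPos) {
  const d = Math.hypot(
    commuterPos.x - interceptorPos.x,
    commuterPos.y - interceptorPos.y
  );
  if (d < FLEE_RADIUS) {
    return { mode: "flee", maxSpeed: PANIC_SPEED };
  }
  return { mode: "followPath", maxSpeed: CRUISE_SPEED };
}
```

Because the decision is re-evaluated every frame, no explicit "return to road" state is needed: once the distance grows past the threshold, path following takes over again and pulls the vehicle back.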

Code I’m Proud Of

I am particularly proud of the logic that allows the vehicle to choose the correct path segment. It doesn’t just look at one line; it scans every segment of the “city” to find the closest one, then projects a target slightly ahead of its “normal point” to ensure it keeps moving forward.

    // Predictive path following
    followPath(path) {
      // Look into the future: project the position 25 px along the current heading
      let predict = this.vel.copy().setMag(25);
      let futurePos = p5.Vector.add(this.pos, predict);

      let target = null;
      let worldRecord = Infinity;

      // Scan all road segments for the closest point
      for (let i = 0; i < path.points.length; i++) {
        let a = path.points[i];
        // Modulo wraps the last point back to the first, closing the loop
        let b = path.points[(i + 1) % path.points.length];
        let normalPoint = getNormalPoint(futurePos, a, b);

        // ... (boundary checks) ...

        let distance = p5.Vector.dist(futurePos, normalPoint);
        if (distance < worldRecord) {
          worldRecord = distance;
          // Look 15 pixels ahead on the segment to stay in motion
          let dir = p5.Vector.sub(b, a).setMag(15);
          target = p5.Vector.add(normalPoint, dir);
        }
      }

      // Steer only if a target was found and we've drifted outside the lane
      if (target !== null && worldRecord > path.radius) {
        this.seek(target);
      }
    }
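
The snippet above calls `getNormalPoint`, which is not shown in this post. A common way to compute a normal point is scalar projection, sketched below in plain JavaScript. This is an assumption about the technique, not the project's actual helper: `projectOntoLine` is a hypothetical name, and it works on plain `{x, y}` objects rather than p5 vectors.

```javascript
// Project point p onto the infinite line through a and b.
function projectOntoLine(p, a, b) {
  const abx = b.x - a.x;
  const aby = b.y - a.y;
  const lengthSq = abx * abx + aby * aby;
  if (lengthSq === 0) {
    // a and b coincide, so there is no direction to project along.
    return { x: a.x, y: a.y };
  }
  // t is how far along a -> b the projection falls (0 at a, 1 at b).
  const t = ((p.x - a.x) * abx + (p.y - a.y) * aby) / lengthSq;
  return { x: a.x + abx * t, y: a.y + aby * t };
}
```

For example, projecting `(5, 5)` onto the line from `(0, 0)` to `(10, 0)` gives `(5, 0)`. Note that this version does not clamp `t`, so the result can fall beyond either end of the segment.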

Reflection

This project shifted my perspective on coding movement. In previous assignments, we moved objects by changing their position; here, we’re moving them by changing their desire. It feels much more like biological programming than math.

I also noticed that, instead of commuters giving way to a police car, the scene can read as race cars on a track fleeing from the police as it approaches. I’ve left the final interpretation up to the reader’s imagination…

Future Ideas

- Adding a separation force so that commuters don’t overlap with each other, creating more realistic traffic jams.

- Allowing the user to click and drag the path points in real time, watching the agents struggle to adapt to the new road layout.

- Integrating the p5.sound library to make the interceptor’s siren get louder as it gets closer to the vehicles (a true Doppler effect would also shift the siren’s pitch).