Luminous Silence: A Botanical Reverie

Project Overview

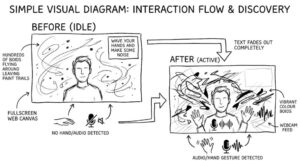

Luminous Silence is an exploration of the emergent, unspoken connections between the human form and the natural world. It envisions the body not as a separate entity from nature, but as a living doorway, a threshold between the visible and the hidden, the silent and the voiced.

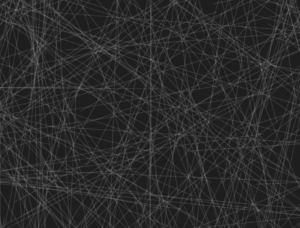

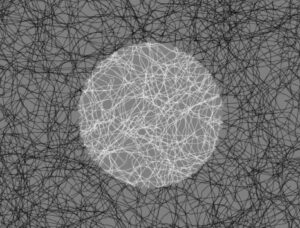

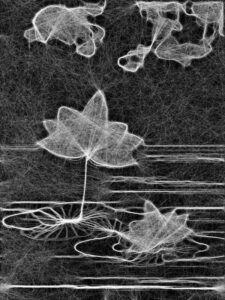

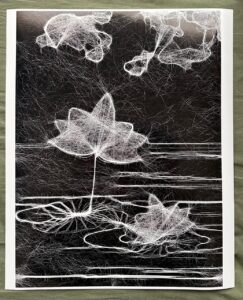

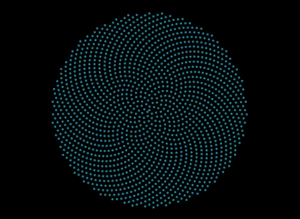

In this interactive ecosystem, your hand becomes a seed. Your breath transforms into weather. The screen before you ceases to be a mere digital display and awakens as a nocturnal garden, blooming and reacting as though it possesses its own quiet intelligence. Rooted in the geometric perfection of phyllotaxis (the spiral growth pattern found in sunflowers and pinecones), the visual landscape begins in a state of cosmic, botanical order. However, this order is fragile and alive. As you introduce your hand, the spiral is disrupted, awakened, and reconfigured.

By employing principles inspired by cellular automata, the artwork simulates a living organism. Each “seed” or point of light within the spiral governs its own state based on localized, rule-based interactions with its environment. Just as cells in an automaton live, die, or illuminate based on their neighbors, the particles here cascade with bioluminescent light, reacting to the ambient noise of the room and the physical proximity of the viewer. Nothing is fixed. Everything exists in a perpetual state of sensing, dissolving, blooming, and remembering.
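The neighbor-driven cascade described above can be illustrated with a continuous-valued, cellular-automaton-style rule. This is a hypothetical sketch, not the project's code. The helper names `buildNeighbors` and `stepGlow`, the distance-based neighborhoods, and the `decay` and `spread` constants are all assumptions. On each synchronous step, a seed either keeps a fading copy of its own glow or catches a dimmer copy of its brightest neighbor's glow, whichever is larger. As a result, light travels outward one hop per step instead of appearing everywhere at once.

```typescript
interface Point {
  x: number;
  y: number;
}

// Hypothetical helper: two seeds are neighbors if they lie within `radius` of each other.
// O(n^2), which is fine for a few hundred seeds; a spatial grid would scale better.
function buildNeighbors(points: Point[], radius: number): number[][] {
  return points.map((p, i) =>
    points.flatMap((q, j) =>
      j !== i && Math.hypot(p.x - q.x, p.y - q.y) <= radius ? [j] : [],
    ),
  );
}

// Hypothetical rule: one synchronous update. Every cell reads only the previous
// generation and a new array is returned, as in a classic cellular automaton.
function stepGlow(
  glow: number[],
  neighbors: number[][],
  decay = 0.9, // fraction of its own light a seed keeps each step
  spread = 0.8, // fraction of a neighbor's light it can catch
): number[] {
  return glow.map((own, i) => {
    let brightest = 0;
    for (const j of neighbors[i]) brightest = Math.max(brightest, glow[j]);
    return Math.max(own * decay, brightest * spread);
  });
}

// Example: five seeds in a row, each seeing only its immediate left/right neighbor.
const row: Point[] = [0, 1, 2, 3, 4].map((x) => ({ x, y: 0 }));
const nbrs = buildNeighbors(row, 1.1);
let glow = [1, 0, 0, 0, 0]; // light the first seed
glow = stepGlow(glow, nbrs);
glow = stepGlow(glow, nbrs); // after two steps the ripple has reached index 2, not index 3
```

The rule reads only the previous generation and returns a fresh array, so the update is synchronous. Without that, an in-place loop could carry light across several seeds in a single pass, and the glow would race across the spiral instead of rippling.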

This is not a landscape meant to be controlled; it is a listening intelligence. The emotional resonance of the piece lies in its awe, fragility, and mystery, akin to standing beside a dark, restless ocean at night, witnessing the water ignite with bioluminescent plankton only where the surface is disturbed.

Implementation Details & The Creative Process

The journey of building Luminous Silence was an exercise in layering complexities. The goal was to move from static mathematical geometry to a fluid, responsive, and “breathing” system. I chose p5.js for the visual rendering and ml5.js for the machine learning hand-tracking capabilities.

The creative process was divided into distinct evolutionary milestones, ensuring that the gap between raw mathematics and poetic interaction was bridged gradually.

Milestone 1: The Seed (Establishing Cosmic Order)

The first step was to build the foundation of the visual language: the phyllotaxis spiral. I needed to ensure the math was sound before introducing any chaos. This milestone focused purely on calculating the golden angle (approximately 137.5 degrees) and plotting the seeds to create the signature sunflower pattern.
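One common way to plot the seeds is Vogel's model. In this model, seed n is rotated by n times the golden angle and placed at a radius proportional to √n. The text specifies only the angle, so the √n radius, the `spacing` parameter, and the function name `phyllotaxis` are assumptions in this sketch.

```typescript
// The text rounds the golden angle to 137.5°; the exact value is 360° / φ² ≈ 137.5078°.
const GOLDEN_ANGLE_DEG = 137.5;

interface Seed {
  x: number;
  y: number;
}

// Vogel's model: seed n sits at angle n·θ and radius spacing·√n around (cx, cy).
function phyllotaxis(count: number, spacing: number, cx = 0, cy = 0): Seed[] {
  const theta = (GOLDEN_ANGLE_DEG * Math.PI) / 180; // degrees to radians
  const seeds: Seed[] = [];
  for (let n = 0; n < count; n++) {
    const angle = n * theta;
    const r = spacing * Math.sqrt(n);
    seeds.push({ x: cx + r * Math.cos(angle), y: cy + r * Math.sin(angle) });
  }
  return seeds;
}

// Example: 500 seeds centered on a 600×600 canvas.
const spiral = phyllotaxis(500, 8, 300, 300);
```

The √n radius keeps the seeds at a roughly even density, because the area of a disc grows with the square of its radius.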

Milestone 2: The Breath (Cellular Automata & Audio Reactivity)

Once the spiral was established, it needed life. I introduced audio input so that the user’s voice and breath could unravel the spiral. I also integrated localized rule sets inspired by cellular automata. With Perlin noise driving the phase and size of each particle, the seeds began to pulse organically, as if passing states of light back and forth among their neighbors.
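The sketch below shows the two drivers in this milestone, with the browser APIs left out so the logic stands alone. `level` stands in for a microphone amplitude between 0 and 1, for example the value p5.sound's `p5.AudioIn` reports through `getLevel()`. `gradientNoise1D` is a small hand-written 1D gradient noise that stands in for p5's `noise()`. It returns values in [-0.5, 0.5] and is shifted into [0, 1] before use. All function names, the smoothing factor, the gain, and the 0.37 per-seed offset are hypothetical.

```typescript
// Deterministic pseudo-random gradient in [-1, 1] for an integer lattice point.
function latticeGradient(i: number): number {
  let h = Math.imul(i | 0, 0x27d4eb2d) ^ 0x165667b1;
  h = Math.imul(h ^ (h >>> 15), 0x85ebca6b);
  h ^= h >>> 13;
  return ((h >>> 0) / 0xffffffff) * 2 - 1;
}

// Perlin's quintic fade curve, smooth at the lattice points.
function fade(t: number): number {
  return t * t * t * (t * (t * 6 - 15) + 10);
}

// 1D gradient noise in [-0.5, 0.5]; exactly 0 at every integer x.
function gradientNoise1D(x: number): number {
  const i0 = Math.floor(x);
  const t = x - i0;
  const left = latticeGradient(i0) * t; // ramp from the left lattice point
  const right = latticeGradient(i0 + 1) * (t - 1); // ramp from the right one
  return left + (right - left) * fade(t);
}

// Exponential smoothing so a single sharp breath does not make the spiral jump.
function smoothLevel(previous: number, raw: number, alpha = 0.15): number {
  return previous + alpha * (raw - previous);
}

// Louder input spreads the seeds further apart; the level is clamped to [0, 1].
function spiralSpacing(baseSpacing: number, level: number, gain = 3): number {
  const clamped = Math.min(Math.max(level, 0), 1);
  return baseSpacing * (1 + gain * clamped);
}

// Every seed reads the same noise curve offset by 0.37 per index, so consecutive
// seeds repeat one another's pulse with a short delay.
function seedSize(index: number, time: number, baseSize = 4): number {
  const n = gradientNoise1D(index * 0.37 + time) + 0.5; // shift into [0, 1]
  return baseSize * (0.5 + n); // between 0.5× and 1.5× the base size
}
```

In a draw loop, the smoothed level would feed `spiralSpacing` once per frame, and `seedSize(i, frameTime)` would size each seed.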

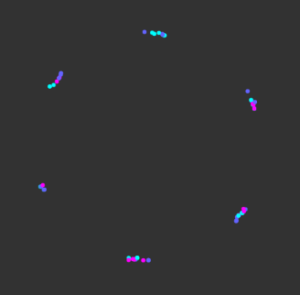

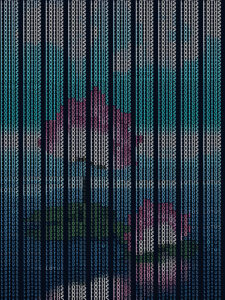

Milestone 3: The Portal (Physical Intersection)

The final milestone before integrating webcam pixel sampling was the physical portal. Using ml5.handPose, the system tracks the position of the user’s palm and calculates the distance between every seed and the hand. When a seed falls within a set radius of the hand, a localized “burst” of spatial distortion pushes it away while increasing its brightness. This cleared space acts as the living doorway between the user and the digital organism.
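The distance test and burst can be sketched as follows. The sketch assumes the palm position has already been derived from the handPose keypoints and mapped into canvas coordinates. The `LiveSeed` record (a resting `home`, a drawn `pos`, and a `glow` value), the linear falloff, and all names are hypothetical.

```typescript
interface Point {
  x: number;
  y: number;
}

// Hypothetical seed record: where it rests, where it is drawn, and how bright it is.
interface LiveSeed {
  home: Point;
  pos: Point;
  glow: number; // 0 = dark, 1 = fully lit
}

// Seeds within burstRadius of the palm are pushed radially outward and brightened.
// Both effects fall off linearly: strongest under the palm, zero at the edge.
function applyHandBurst(
  seeds: LiveSeed[],
  palm: Point,
  burstRadius: number,
  maxPush: number,
): void {
  for (const s of seeds) {
    const dx = s.home.x - palm.x;
    const dy = s.home.y - palm.y;
    const d = Math.hypot(dx, dy);
    if (d >= burstRadius) continue; // outside the portal: leave untouched
    const strength = 1 - d / burstRadius;
    // Unit vector away from the palm; pick an arbitrary direction if the seed is exactly under it.
    const ux = d > 0 ? dx / d : 1;
    const uy = d > 0 ? dy / d : 0;
    s.pos = {
      x: s.home.x + ux * maxPush * strength,
      y: s.home.y + uy * maxPush * strength,
    };
    s.glow = Math.max(s.glow, strength);
  }
}

// Example: a palm at the origin opens a 20 px portal.
const seeds: LiveSeed[] = [
  { home: { x: 10, y: 0 }, pos: { x: 10, y: 0 }, glow: 0 },
  { home: { x: 30, y: 0 }, pos: { x: 30, y: 0 }, glow: 0 },
];
applyHandBurst(seeds, { x: 0, y: 0 }, 20, 10);
```

Offsetting from `home` rather than accumulating into `pos` keeps the push from compounding frame after frame. A separate step is still needed to ease seeds back once the hand leaves.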

Video Documentation

The accompanying video documentation captures the intimate choreography between human and machine. It begins in pure darkness, save for the pulsing instruction screen. As the user raises their hand, the video highlights the immediate, fluid transition: the sudden materialization of the botanical galaxy.

Key moments highlighted in the video include:

- The Awakening: The initial distortion of the spiral as the user’s hand enters the frame, showing the “portal” effect where the hand clears a space among the seeds.
- The Whisper: The user speaking into the microphone, demonstrating the audio-reactive expansion and color shift (neon mode) of the cellular particles.
- The Touch: The user clicking the screen to alter the ecosystem’s hue, showing the transition from deep oceanic blues to vibrant, unearthly colors.

Final Sketch

Reflection

Luminous Silence succeeds in stepping away from the paradigm of “technology as a tool” and moves toward “technology as an entity.” The user experience feels profoundly intimate. Because the raw camera feed is hidden and revealed only through the scattered, glowing seeds, users report a feeling of looking into a magic mirror, one that reflects their energy and silhouette rather than their physical details.
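Here is a minimal sketch of the pixel sampling behind this effect. It assumes the frame is a flat RGBA array with four entries per pixel, which is the layout p5.js uses for a capture's `pixels` array after `loadPixels()`. It also assumes the video and canvas share the same dimensions. The function name, the horizontal mirroring, and the Rec. 709 luma weights are assumptions. The returned brightness could scale each seed's alpha, so the silhouette's light reaches the screen but the image itself does not.

```typescript
// Perceived brightness (0 to 1) of the video pixel under a seed, or 0 if the seed is off-frame.
// `pixels` is a flat RGBA buffer: 4 entries per pixel, row by row.
function sampleBrightness(
  pixels: ArrayLike<number>,
  width: number,
  height: number,
  x: number,
  y: number,
  mirror = true, // flip horizontally so the piece behaves like a mirror
): number {
  let px = Math.floor(x);
  const py = Math.floor(y);
  if (px < 0 || py < 0 || px >= width || py >= height) return 0;
  if (mirror) px = width - 1 - px;
  const i = 4 * (py * width + px);
  // Rec. 709 luma weights for R, G, B; alpha (pixels[i + 3]) is ignored.
  return (0.2126 * pixels[i] + 0.7152 * pixels[i + 1] + 0.0722 * pixels[i + 2]) / 255;
}

// Example: a 2×1 frame with a white pixel on the left and a black one on the right.
const frame = new Uint8ClampedArray([255, 255, 255, 255, 0, 0, 0, 255]);
```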

The cellular automata logic, in which the glow ripples through the system rather than flashing uniformly, was vital to achieving the feeling of a living organism. It evokes fragility: if the user drops their hand and falls silent, the piece returns to its quiet, resting geometric state, waiting in the dark.
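The return to rest can be sketched as per-frame easing. Each seed moves toward its home position on the spiral while its glow decays. The `ease` and `fade` factors, the seed record, and the function name `relax` are hypothetical.

```typescript
interface Point {
  x: number;
  y: number;
}

interface LiveSeed {
  home: Point;
  pos: Point;
  glow: number;
}

// One frame of relaxation: close a fraction `ease` of the distance home and
// keep a fraction `fade` of the glow. Both converge geometrically toward rest.
function relax(seeds: LiveSeed[], ease = 0.08, fade = 0.95): void {
  for (const s of seeds) {
    s.pos.x += (s.home.x - s.pos.x) * ease;
    s.pos.y += (s.home.y - s.pos.y) * ease;
    s.glow *= fade;
  }
}

// Example: a displaced, fully lit seed left alone for 200 frames.
const seed: LiveSeed = { home: { x: 0, y: 0 }, pos: { x: 50, y: 0 }, glow: 1 };
for (let f = 0; f < 200; f++) relax([seed]);
```

Because the approach is geometric, a seed gets arbitrarily close to home without landing exactly on it. In practice the remaining gap soon drops below a pixel.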

Future Improvements: In future iterations, I would like to expand the sensory inputs. Integrating fluid dynamics could allow the seeds to drift like actual spores in water rather than snapping back to their rigid spiral paths. Furthermore, implementing a multi-user interaction where two hands create overlapping, conflicting cellular rules could beautifully illustrate the tension and harmony of shared ecosystems.

References & Inspirations

Artistic & Conceptual:

- Bioluminescent Organisms: Deep-sea life and glowing fungi inspired the stark contrast of bright light emerging from absolute darkness.
- Botanical Geometry: The mathematical precision of sunflower seed arrangements (phyllotaxis) serves as the structural backbone of the piece.
- Generative Systems: The concept of cellular automata (pioneered by John von Neumann and popularized by John Conway's Game of Life) inspired the localized, emergent behavior of the particles.
- Spiritual Interaction Design: The interaction is designed to feel less like a software interface and more like a meditative invocation or a digital shrine.

Technical Resources:

- p5.js Library: For canvas manipulation, noise generation, and audio input processing.
- ml5.js Library: Utilizing the pre-trained handPose model for real-time skeletal tracking.
- The Nature of Code by Daniel Shiffman: Specifically the chapters covering autonomous agents, noise, and cellular automata.