Project Overview

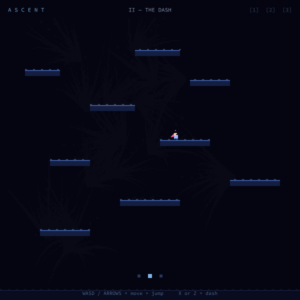

I wanted to make something that felt like a real game, and I kept asking myself which topics from this class would make a good one. Feeding Frenzy seemed like the perfect fit because the whole thing runs on flocking. The small fish school together for safety using separation, alignment, and cohesion. The predators use a seek force to hunt you. And the player is just another agent in the same system, subject to the same rules about size and proximity.

The core idea is a size hierarchy: you eat what’s smaller than you, you avoid what’s bigger than you. You start as a tiny glowing fish and work your way up through six schools of progressively larger fish. When you’re small the red predators hunt you. When you grow big enough they stop hunting and you can eat them too. The final state is you as the largest thing in the ocean, every group scattering at your approach.

I really liked how the emergent behavior from flocking makes this feel alive in a way that a lot of games don’t. The fish aren’t following scripted paths, which gives the game its flavor. They’re actually making decisions every frame based on separation, alignment, cohesion, and whether they sense you as a threat. When you approach a group of fish and it splits apart around you it looks exactly like real fish behavior, and that’s because the math is the same math.

I also chose to put a proper main screen with three difficulty levels because I wanted the project to feel like a finished game. The three levels (HARD, MEDIUM, EASY) map to different player speeds, and on HARD the player speed is actually so slow that eating anything is almost impossible. Have fun trying to eat any fish before the red ones eat you up xD

How It Works

The whole project builds on three layers that I added one milestone at a time.

The foundation is the flocking system. Every boid in every school runs the same three rules: separate from neighbors that get too close, align with neighbors moving nearby, cohere toward the average position of the group. These three forces balance against each other every frame. When a threat is nearby a fourth force kicks in, a flee force that points directly away from the threat, and its weight overrides the cohesion and alignment so the group scatters instead of holding together. I really like how this means the schooling behavior and the panic behavior are the same system, just with different weights.
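To make the fourth force concrete, here is a minimal sketch of what a flee force like this could look like. It is not the project's code: plain {x, y} objects stand in for p5.Vector so it runs outside p5, and the maxForce value is an assumption (the maxSpeed default of 2.8 is the boid speed mentioned later in this post).

```javascript
// Hypothetical flee force. Plain {x, y} objects replace p5.Vector.
// maxForce = 0.2 is an assumed value, not taken from the sketch.
function flee(boid, threatPos, radius, maxSpeed = 2.8, maxForce = 0.2) {
  const dx = boid.pos.x - threatPos.x;
  const dy = boid.pos.y - threatPos.y;
  const d = Math.hypot(dx, dy);

  // Outside the flee radius (or exactly on top of the threat): no force.
  if (d === 0 || d > radius) return { x: 0, y: 0 };

  // Desired velocity points directly away from the threat at full speed.
  const desired = { x: (dx / d) * maxSpeed, y: (dy / d) * maxSpeed };

  // Steering = desired velocity minus current velocity, clamped to maxForce.
  let sx = desired.x - boid.vel.x;
  let sy = desired.y - boid.vel.y;
  const m = Math.hypot(sx, sy);
  if (m > maxForce) {
    sx = (sx / m) * maxForce;
    sy = (sy / m) * maxForce;
  }
  return { x: sx, y: sy };
}
```

Because the force is zero outside the radius, a school far from the threat keeps flocking normally, and the flee weight only matters once something gets close.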

The second layer is the player and eating. The player uses a steering force toward the direction of the pressed keys, limited by a max speed and a max force. Eating detection is a simple distance check: if the player’s size is greater than the boid’s size minus 1, and the distance between them is within the combined radii, the boid gets eaten and the player grows.
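The player steering described above can be sketched roughly as follows. This is an illustration under assumptions, not the project's code: the keys object shape, the steerPlayer name, and the choice to let the player coast when no key is pressed are all hypothetical, and plain {x, y} objects replace p5.Vector.

```javascript
// Clamp a vector's magnitude to max (same idea as p5.Vector's limit()).
function limit(v, max) {
  const m = Math.hypot(v.x, v.y);
  return m > max ? { x: (v.x / m) * max, y: (v.y / m) * max } : v;
}

// keys: { left, right, up, down } booleans (assumed input shape).
function steerPlayer(player, keys, maxSpeed, maxForce) {
  const dir = {
    x: (keys.right ? 1 : 0) - (keys.left ? 1 : 0),
    y: (keys.down ? 1 : 0) - (keys.up ? 1 : 0),
  };
  const m = Math.hypot(dir.x, dir.y);

  // No key pressed: no steering, the player just coasts (an assumption).
  let steer = { x: 0, y: 0 };
  if (m > 0) {
    const desired = { x: (dir.x / m) * maxSpeed, y: (dir.y / m) * maxSpeed };
    steer = limit(
      { x: desired.x - player.vel.x, y: desired.y - player.vel.y },
      maxForce
    );
  }

  const vel = limit(
    { x: player.vel.x + steer.x, y: player.vel.y + steer.y },
    maxSpeed
  );
  const pos = { x: player.pos.x + vel.x, y: player.pos.y + vel.y };
  return { ...player, vel, pos };
}
```

The max force is what makes the player feel like a fish rather than a cursor: the velocity eases toward the key direction over several frames instead of snapping to it.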

The third layer is the tier progression and predators. I defined six fixed schools with sizes stepping from 3 up to 42. The player starts at size 14 and grows with each eat. A progress bar tracks how close the player is to the next tier unlock size. Predators use a seek force toward the player when they’re close enough and the player is still small enough to eat. Once the player reaches 75% of the predator’s size the predator stops hunting and the player can eat it instead.

Code I’m Particularly Proud Of

This is the section of the flee behavior I spent the most time getting right:

    applyBehaviors(boids) {
      // Flee only from a player that is close to this fish's size or bigger
      let playerBigger = player.sz > this.sz - 2;
      // Flee radius multiplier eases from 7x to 3.5x as the player grows from 14 to 80
      let fleeRad = map(player.sz, 14, 80, player.sz * 7, player.sz * 3.5);
      let fleeForce = playerBigger ? this.flee(player.pos, fleeRad) : createVector(0, 0);
      this.fleeing = fleeForce.mag() > 0.01;

      let sep = this.separate(boids);
      let ali = this.align(boids);
      let coh = this.cohere(boids);

      // While fleeing, alignment and cohesion are damped so the school breaks apart
      sep.mult(1.8);
      ali.mult(this.fleeing ? 0.4 : 1.2);
      coh.mult(this.fleeing ? 0.25 : 1.0);
      fleeForce.mult(4.2);
      ...
    }

The part I like is the fleeRad calculation. When the player is small, the flee radius is player.sz * 7, so fish start scattering from a good distance away. As the player grows, the multiplier shrinks linearly, reaching 3.5 at size 80, so the radius still grows but much more slowly than it would at a fixed 7x. This means that once you’re large, fish don’t scatter from across the canvas, they only react when you’re actually close to them. Without this, once I was big the entire canvas would clear every time I moved anywhere, which made the late game unplayable. Shrinking the multiplier as you grow is what gives the final stages their completely different feel compared to the early ones.
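To see what the fleeRad line actually produces, here is a small worked example. mapRange is a stand-in I wrote for p5's map() (linear and unclamped by default) so the numbers can be checked outside p5; the fleeRadius wrapper name is hypothetical.

```javascript
// Stand-in for p5's map(): linear remap, not clamped to the output range.
function mapRange(v, inMin, inMax, outMin, outMax) {
  return outMin + ((v - inMin) / (inMax - inMin)) * (outMax - outMin);
}

// Same expression as the fleeRad line in applyBehaviors.
function fleeRadius(playerSz) {
  return mapRange(playerSz, 14, 80, playerSz * 7, playerSz * 3.5);
}

// Print player size, flee radius in px, and the effective multiplier.
for (const sz of [14, 50, 80]) {
  const r = fleeRadius(sz);
  console.log(sz, r.toFixed(1), (r / sz).toFixed(2));
}
```

At size 14 this gives a radius of 98 (7x), at size 50 about 254.5 (roughly 5.1x), and at size 80 exactly 280 (3.5x). So the absolute radius keeps growing, but the multiplier is what drops. Since the remap is unclamped, the multiplier would keep falling past size 80 unless the sketch constrains it somewhere else.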

Building It Up: Milestones & Challenges

Milestone 1: Flocking School with Mouse Threat

I started by just getting one school of fish working with the three flocking rules, using the mouse as the threat instead of a player fish. No eating, no game loop, just the behavior. I wanted to understand what the flee force actually looks like before adding anything on top of it.

This turned out to be more useful than I expected as a first step. I could just move the mouse around and watch how the group responded without any other variables in play. What I noticed immediately is that the school feels much more alive when it’s fleeing than when it’s just flocking normally. The tighter cohesion during calm states versus the complete scatter during panic is exactly the visual I wanted for the final game. I also tuned the flee force weight here. My first value was 2.0 and it barely looked like fleeing. Going to 4.2 made the scatter feel panicked and fast, which is what I wanted.

Milestone 2: Player Fish + Eating

Once the school behavior felt right I replaced the mouse threat with an actual player fish that I could steer with arrow keys. I added the eating detection and the size growth system. Still just one group at this point and no predators.

The most annoying thing to get right here was the eating radius. My first version used player.sz as the hit radius, which sounds logical but felt terrible in practice: the visual glow extends well beyond the raw sz value, so you’d visually overlap a fish without eating it. I changed the check to player.sz * 1.1 + boid.sz * 0.9, which accounts for both bodies, and that immediately felt right. You eat a fish roughly when the visual bodies overlap.
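Put together with the size rule, the eating check could be sketched like this. The canEat name and the strict less-than on distance are assumptions; the sz and pos fields and the two formulas come from this post.

```javascript
// Hypothetical eat check combining the size rule and the combined-radius rule.
function canEat(player, boid) {
  // Size rule: the player may eat fish that are nearly its own size.
  const bigEnough = player.sz > boid.sz - 1;

  // Distance rule: both bodies contribute to the hit radius.
  const d = Math.hypot(player.pos.x - boid.pos.x, player.pos.y - boid.pos.y);
  const reach = player.sz * 1.1 + boid.sz * 0.9;

  return bigEnough && d < reach;
}
```

For a size-14 player and a size-3 fish the reach works out to about 18.1px, compared with 14px when only player.sz was used, which is the difference between eating on visual overlap and swimming through a fish without eating it.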

I also added the trail system at this milestone. The trails were genuinely the most visually satisfying addition in the whole project. Without trails the fish feel like sprites moving on a flat screen. With trails the canvas feels like water that things are moving through. The teal-to-gold color shift on the player trail as they grow was something I added just to see what it looked like and I ended up loving it.

Milestone 3: Tiers, Predators, Full Ocean

I expanded from one school to six schools at fixed sizes, added the tier unlock progression, and added two predator fish that hunt the player when they’re small. I also did the full ocean visuals here: the persistent dark background with plankton particles drifting on a slow current, caustic light shafts from the surface, and bioluminescent color trails for every fish.

The predator logic was simpler to write than I expected because it’s just the same seek force the vehicles used in the steering assignment. The predator seeks the player when the player is small enough. The only new thing was adding the condition to stop seeking when the player grows large enough, and then adding the reverse check so the player can eat the predator when they’re big enough. The whole predator system is about 8 lines.
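A rough sketch of that predator decision, under assumptions: the predatorState name, the returned labels, and the senseRadius parameter are hypothetical, and the 75% threshold is the one described in the How It Works section.

```javascript
// Hypothetical predator decision. Returns "hunt", "idle", or "edible".
function predatorState(predator, player, senseRadius) {
  const d = Math.hypot(
    player.pos.x - predator.pos.x,
    player.pos.y - predator.pos.y
  );

  // Below 75% of the predator's size, the player is still prey.
  const playerIsPrey = player.sz < predator.sz * 0.75;

  if (playerIsPrey) {
    // Seek only when the player is close enough to be noticed.
    return d < senseRadius ? "hunt" : "idle";
  }
  // At or above the threshold the roles flip: no more seeking,
  // and the player is allowed to eat the predator.
  return "edible";
}
```

In the "hunt" state the predator would apply the usual seek force (desired velocity toward the player minus current velocity, clamped to a max force); the other two states just skip that force.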

What took more time was the tier progression. My first version just checked if the player was bigger than a boid before allowing an eat, which worked but meant there was no sense of stages or levels. Defining the TIER_UNLOCK array of target sizes and showing the progress bar toward the next target immediately made the game feel structured. You always know what you’re working toward.
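Here is one way the progress bar value could be derived from an array like TIER_UNLOCK. The numbers in the array below are made up for illustration (the real values are not listed in this post), and tierProgress is a hypothetical helper; only the array name and the starting size of 14 come from the project.

```javascript
// Placeholder unlock sizes for illustration only, not the game's real values.
const TIER_UNLOCK = [18, 24, 30, 36, 42, 50];

// Returns the current tier index and how far (0..1) the player is
// between the previous unlock size and the next one.
function tierProgress(playerSz, startSz = 14) {
  let prev = startSz;
  for (let i = 0; i < TIER_UNLOCK.length; i++) {
    const target = TIER_UNLOCK[i];
    if (playerSz < target) {
      const fraction = (playerSz - prev) / (target - prev);
      return { tier: i, fraction: Math.max(0, fraction) };
    }
    prev = target;
  }
  // Past the last unlock size: every tier is open and the bar is full.
  return { tier: TIER_UNLOCK.length, fraction: 1 };
}
```

The fraction can be fed straight into the width of the bar, so the bar resets to empty each time a tier unlocks and the next target takes over.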

Challenge: Fish Running Away Too Fast at Large Sizes

The hardest gameplay problem I hit was in the late game. In the first version the flee radius was always player.sz * 7, which means at size 50 the flee radius was 350px. Every school on the visible canvas scattered the moment I appeared anywhere near them. I couldn’t catch anything.

The fix was the scaling flee radius I described earlier: map(player.sz, 14, 80, player.sz * 7, player.sz * 3.5). By size 80 the multiplier has dropped from 7 to 3.5, halving the detection range compared with the original formula. Schools don’t react until I’m actually close to them. This changed the late game from frustrating to actually interesting, because at large sizes you have to carefully approach schools rather than just running into them.

I also had to tune the eating condition a few times. The original version was player.sz > boid.sz + 2, which meant the player had to be more than 2 units bigger. Combined with the fact that boids flee when the player is near their size, this created a window of sizes where the player was big enough to trigger the flee behavior but not big enough to actually eat them. I changed the condition to player.sz > boid.sz - 1 so the player can eat fish that are nearly the same size, which closed almost all of that frustrating gap.
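The size window can be made concrete with a quick enumeration. This sketch assumes whole-number sizes for readability (the game's sizes may be fractional) and uses the flee condition from the applyBehaviors excerpt; the helper names are hypothetical.

```javascript
// Flee rule from applyBehaviors: the boid flees if the player is near its size or bigger.
const flees = (playerSz, boidSz) => playerSz > boidSz - 2;
// Old and new eating rules quoted in this post.
const oldCanEat = (playerSz, boidSz) => playerSz > boidSz + 2;
const newCanEat = (playerSz, boidSz) => playerSz > boidSz - 1;

// Player sizes (integers near boidSz) where the boid flees but cannot be eaten.
function gapSizes(boidSz, eatRule) {
  const sizes = [];
  for (let sz = boidSz - 4; sz <= boidSz + 4; sz++) {
    if (flees(sz, boidSz) && !eatRule(sz, boidSz)) sizes.push(sz);
  }
  return sizes;
}

console.log(gapSizes(20, oldCanEat)); // [ 19, 20, 21, 22 ]
console.log(gapSizes(20, newCanEat)); // [ 19 ]
```

For a size-20 fish, the old rule left four player sizes where the fish ran away but could not be eaten. With the new rule only a one-unit sliver remains, where the player is between 2 and 1 units smaller than the fish.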

Final Result & Video Documentation

Reflection

What I find most satisfying about this project is that everything interesting in it comes from the flocking system I started with in Milestone 1. The panic scatter when I approach a school, the way it re-forms after I move away, the way the predator tracks me by adjusting direction every frame, the way eating feels physically right because the distances involved match the visual sizes of the fish. All of that is just separation, alignment, cohesion, seek, and flee, balanced against each other with different weights.

I also think the difficulty system is more interesting than it might look. On HARD the player speed is 2.2, which is actually slower than the boid maxSpeed of 2.8, so catching anything requires either cutting them off or cornering them against an edge. On EASY the player speed is 9.5, which makes you feel invincible. The game is the same, the behavior is the same, but a speed difference of less than 10 units changes the entire feel of the experience.

What I’d add next:

- Sound: the eat burst should have a small pop or crunch and the predator should have an ambient low tone that gets louder when it’s close

- Power-ups: a speed boost pickup that floats in the current, giving the player a brief burst of extra speed to catch a school that’s scattering away

- More predator behavior: right now predators just seek. Adding a subtle cohesion force between predators would make them loosely coordinate, which would look spectacular and make the early game more tense

The most satisfying moment in the whole project was in Milestone 3, before I had a win state implemented. Each time you played, you could grow as big as you wanted until the sketch started glitching.

References

- Daniel Shiffman, The Nature of Code: flocking rules, seek/flee steering, applyForce/update pattern

- Feeding Frenzy (2004 game developed by Sprout Games, published by PopCap Games): core size hierarchy concept

- Course material on flocking, steering behaviors, and forces

- AI Disclosure: Claude (Anthropic) assisted in idea forming, speed adjusting, coloring, and debugging.