As crazy as it sounds, a big inspiration for this sketch is a song by a not very well-known band called Fairtrade Narcotics, especially the part that starts around 4:10, as well as this video: Instagram

To toggle modes, press Space to switch to Galaxy and press Enter to switch to Membrane.

GALACTIC is an interactive particle system built around a single mechanic: pressure. Pressure from the mouse, pressure of formation, and pressure of holding it together. This sketch is built around the state of mind I had when I first discovered the song in 2022. I played it on repeat during very dark times and it was mending my soul. After every successful moment I had at that time, during my college application period, I would play that specific part of the song and it would lift me to galactic levels. The sketch has 3 modes. The charging mode represents the times I put a lot of effort into something and it eventually works out, which is represented by the explosion. The second mode illustrates discipline by forming the particles into a galaxy. The last is Membrane, which represents warmth and support from all my loved ones.

Before writing a single line, I sketched the architecture on paper. The system has three major layers of responsibility: the global state (are we holding? are we exploding? what’s the charge level?), the particle population (a pool of objects that each manage their own physics), and the vfx (trails, embers, glow pulses, which are short-lived visual elements that don’t need the full particle class). I accumulated my notes and compiled them into beautiful pseudocode that I can follow. This is me abusing what I learned taking Data Structures, and honestly, designing the system beforehand works really well for me.

Please check the PDF for the pseudocode, because there’s this ANNOYING issue where no matter what scale of screenshot I upload, it always comes out blurry and small.

I also want to disclose that AI helped me with many mathematical sections within this sketch. I don’t think I would have been able to understand the math or get around it on my own. But I promise my usage is not excessive or dependent, and I actually use it to learn haha.

Anyway, I started writing attributes for the particle class and oh boy they added up QUICKLY. Here’s a snippet. I tried assigning random values manually but it was very very hard to find the sweet spot for everything so I used some help from AI to assign the proper values to those attributes and I tweaked them a little bit and got really good results.

class Particle {

constructor(x, y) {

this.x = x;

this.y = y;

this.vx = 0; this.vy = 0;

this.nox = random(10000);

this.noy = random(10000);

this.ns = random(0.0015, 0.004);

this.driftSpd = random(0.5, 1.2);

this.baseSize = random(1.8, 4);

this.size = this.baseSize;

this.baseHue = random(228, 288);

this.hue = this.baseHue;

this.sat = random(55, 85);

this.bri = random(75, 100);

this.alpha = random(40, 70);

this.maxAlpha = this.alpha;

this.life = 1;

this.dead = false;

this.wobAmp = random(0.3, 0.9);

this.wobFreq = random(2, 4.5);

this.orbSpd = random(0.015, 0.04) * (random() > 0.5 ? 1 : -1);

this.drag = random(0.93, 0.97);

this.explSpd = random(0.6, 1.4);

this.rotDrift = random(-0.35, 0.35);

this.absorbed = false;

this.trailTimer = 0;

this.suctionTrailTimer = 0;

this.behavior = BEHAVE_RADIAL;

this.spiralDir = random() > 0.5 ? 1 : -1;

this.spiralTight = random(0.03, 0.09);

this.boomerangTimer = 0;

this.boomerangPeak = random(0.3, 0.5);

this.flutterFreqX = random(5, 12);

this.flutterFreqY = random(5, 12);

this.flutterAmp = random(2, 6);

this.cometTrailRate = 0;

this.explodeOriginX = 0;

this.explodeOriginY = 0;

}

// ... methods omitted in this snippet

}

A useful frame for interactive generative art is the state machine. This sketch has three primary states that produce visually distinct experiences, and the transitions between them are where most of the design work happened.

Idle state: No mouse interaction. 80 particles drift across the canvas on Perlin noise. Each particle has its own noise offset, frequency, and speed. The result is slow, organic, slightly hypnotic. The palette sits in the 228-288 HSB hue range (blue through violet) and particles breathe gently at a rate of 2 cycles per second. This is the sketch’s resting face, and it needs to be beautiful enough to watch on its own.

Charging state: Mouse held. New particles spawn at the edge of the screen and get pulled toward the cursor which acts as an attractor. Spawn rate accelerates from 1/frame to 18/frame as charge approaches maximum. The vortex arms appear past 8% charge: three logarithmic spirals that rotate faster as charge builds, drawn with beginShape()/vertex() and per-vertex stroke colors that fade toward the outer edge. The glow orb grows around the cursor. Screen rumble starts at 60% charge. Particles near the cursor compress and brighten. The hue of nearby particles shifts toward 305, a hot magenta-violet. Every visual element does the same narrative work: energy is accumulating.

Explosion state: Mouse released. This is tiered across four discrete levels (0 through 3) based on charge thresholds at 0.25, 0.55, and 0.85. Tier 0 is a gentle push. Tier 3 is a white-flash, screen-shake, 800-pixel-radius detonation that spawns up to 70 child particles from split candidates nearest the blast origin. Each particle in blast range gets a force vector calculated from distance falloff (pow(1 - d/blastRadius, 2)), a random rotation drift, and a behavior assignment weighted by proximity to center and charge level. The explosion duration scales with charge, from 1.4 seconds to 4 seconds. The slowdown at high charge gives full-tier explosions a cinematic quality: the cloud expands, holds, then dissipates.
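The numbers in the charging and explosion descriptions above can be collected into a few small pure functions. This is only a minimal sketch: the function names (`spawnRate`, `chargeEffects`, `explosionTier`, `blastFalloff`, `explosionDuration`) are hypothetical, and the linear ramps and the inclusive threshold comparisons are assumptions, since the text gives the endpoints but not the exact curves.

```javascript
// Hypothetical helpers. Only the constants (1, 18, 0.08, 0.6, 0.25, 0.55, 0.85,
// 1.4, 4) and the falloff formula come from the text; the rest is assumed.
const clamp01 = (v) => Math.min(1, Math.max(0, v));

// Particles spawned per frame: 1 at zero charge, 18 at full charge (linear ramp assumed).
function spawnRate(charge) {
  return Math.round(1 + (18 - 1) * clamp01(charge));
}

// Which charging effects are active at a given charge level.
function chargeEffects(charge) {
  const c = clamp01(charge);
  return {
    vortexArms: c > 0.08, // spiral arms appear past 8% charge
    rumble: c >= 0.6,     // screen rumble starts at 60% charge
  };
}

// Explosion tier 0..3 from the charge thresholds.
function explosionTier(charge) {
  if (charge >= 0.85) return 3;
  if (charge >= 0.55) return 2;
  if (charge >= 0.25) return 1;
  return 0;
}

// Force multiplier for a particle at distance d from the blast origin.
// Returns 0 for particles outside the blast radius.
function blastFalloff(d, blastRadius) {
  if (d >= blastRadius) return 0;
  return Math.pow(1 - d / blastRadius, 2);
}

// Explosion duration in seconds: 1.4 s at zero charge, 4 s at full charge.
function explosionDuration(charge) {
  return 1.4 + (4 - 1.4) * clamp01(charge);
}
```

With the tier 3 radius of 800 pixels, a particle halfway out (`blastFalloff(400, 800)`) receives a quarter of the force felt at the origin, which is why the squared falloff keeps the outer cloud so much calmer than the core.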

The variation space this produces is wide. A quick series of light taps creates a dotted constellation. Holding in one place while moving slightly creates smeared, comet-like trails. A patient full charge, held long enough to feel the rumble, produces a different kind of satisfaction.

The scariest parts of this project were two things braided together: performance under additive blending with 700+ particles, and making the multi-behavior explosion feel coherent rather than random.

Additive blending (blendMode(ADD)) is visually spectacular. Overlapping particles bloom into white rather than muddy brown. The cost is real though: each ellipse composites against everything underneath it. With three ellipses per particle (the outer glow halo, the mid-glow body, and the bright core), plus trail objects, plus embers, a naive implementation at 700 particles hits framerate problems fast. The risk was a beautiful system running at 20fps. I ran many optimization passes, but then I migrated to VS Code, which was MUCH smoother. I don’t know how smart that is going to be, though, because in the end I’m going to have to embed the sketch in the p5.js web editor, and it makes no difference that it runs smoothly on my device if it’s laggy on the website.

For the behavior system, the risk was that five different particle behaviors during explosion would read as a mess of conflicting physics. To test this, I implemented behaviors one at a time and ran the explosion at full charge with only that behavior active, watching whether each produced a readable visual signature. BEHAVE_COMET needed the highest speed and the lowest drag (0.99 vs the standard 0.93-0.97) to produce visible streaks. BEHAVE_BOOMERANG needed the timer offset: if the return force kicked in immediately, particles just wobbled. They needed to actually leave the origin first. BEHAVE_FLUTTER was the most unpredictable and required the dampening multiplier (vx *= 0.985) to prevent runaway acceleration from the oscillating force.

The assignBehavior() method’s probability table weights behavior by charge level and proximity to blast center. Close-in particles at high charge get COMET and SPIRAL; far particles get RADIAL and FLUTTER. This creates a natural visual structure: a dense bright core of fast-moving comets surrounded by a slowly oscillating outer cloud. The explosion has a center and a periphery, which reads as physically plausible even though the physics are entirely invented.
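To make the idea of a probability table concrete, here is a minimal sketch of weighted behavior selection. It is not the actual assignBehavior() method: the function name `pickBehavior`, the `proximity` parameter, and every weight are made-up placeholders chosen only so that close-in, high-charge particles favor COMET and SPIRAL while far particles favor RADIAL and FLUTTER.

```javascript
// Behavior names follow the text; every weight below is a placeholder.
const BEHAVIORS = ["RADIAL", "COMET", "SPIRAL", "BOOMERANG", "FLUTTER"];

// charge: 0..1. proximity: 1 at the blast center, 0 at the edge of the blast radius.
// rand: a function returning a number in [0, 1), injectable for testing.
function pickBehavior(charge, proximity, rand = Math.random) {
  const hot = charge * proximity; // high only for close-in particles at high charge
  const weights = [
    1.0 - 0.7 * hot, // RADIAL: favored far from the center
    2.0 * hot,       // COMET: favored close in at high charge
    1.5 * hot,       // SPIRAL: favored close in at high charge
    0.4,             // BOOMERANG: constant share
    0.8 - 0.5 * hot, // FLUTTER: favored far from the center
  ];
  const total = weights.reduce((sum, w) => sum + w, 0);

  // Roulette-wheel selection: walk the table until the roll is used up.
  let roll = rand() * total;
  for (let i = 0; i < weights.length; i++) {
    roll -= weights[i];
    if (roll < 0) return BEHAVIORS[i];
  }
  return BEHAVIORS[BEHAVIORS.length - 1]; // guard against rounding error
}
```

Because the COMET and SPIRAL weights are multiplied by `hot`, they drop to zero at the periphery or at low charge, so those behaviors can only ever show up near the core of a strong explosion.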

But there comes another problem. I don’t like the black background so I decided to create a galaxy background. I had a rough idea how to make it but I had to do some research.

Guess what, all of those links were useless. I found nothing helpful, but I didn’t want to give up. So I found this Reddit post and this page, and I followed the principles and methods in the article to create something cool.

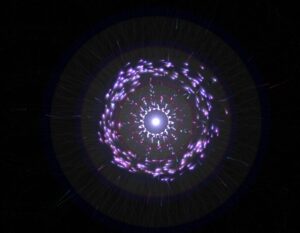

I tried to upload the gif of the animated image but I got this error so I will just upload a screenshot unfortunately.

This is a wall I ran into. No matter what I did with the background, it either looked ugly or became very laggy. So I went with the vibe that space by nature is void and light in it is absent, and black is the absence of light. Therefore, the background is black. I feel like a good skill of being an artist is to convince people that your approach makes sense when you’re forced to stick with it.

Then I moved to implementing the galaxy.

The galaxy implementation started with a question I couldn’t immediately answer: how do you turn drifting particles into something that looks like a spiral galaxy without teleporting them there? My first instinct was to assign each particle a slot on a pre-calculated spiral arm and pull it toward that slot. I wrote assignGalaxyTargets(), sorted particles by their angle from center, matched them to sorted target positions, and felt pretty good about it.

function assignGalaxyTargets() {

let n = particles.length;

// build target list at fc = 0 (static, for assignment geometry only)

let targets = [];

for (let j = 0; j < n; j++) {

let gi = j * (GALAXY_TOTAL / n);

let pos = galaxyOuterPos(gi, 0);

targets.push({ gi, x: pos.x, y: pos.y,

ang: atan2(pos.y - galaxyCY, pos.x - galaxyCX) });

}

// sort particles by current angle from galaxy center

let sortedP = particles

.map(p => ({ p, ang: atan2(p.y - galaxyCY, p.x - galaxyCX) }))

.sort((a, b) => a.ang - b.ang);

// sort targets by their angle

targets.sort((a, b) => a.ang - b.ang);

// assign in matched order → minimal travel distance

for (let j = 0; j < n; j++) {

sortedP[j].p.galaxyI = targets[j].gi;

}

galaxyAssigned = true;

}

I lied. It looked awful. Particles on the right side of the canvas were getting assigned to slots on the left and crossing the entire screen to get there. The transition looked like someone had scrambled an egg.

The fix was to delete almost all of that code. Instead of pulling particles toward external target positions, I read each particle’s current position every frame, converted it to polar coordinates relative to the galaxy center, and applied two forces directly: a tangential force that spins it into orbit, and a very weak radial spring that nudges it back if it drifts too far inward or outward. Inner particles orbit faster because the tangential speed coefficient falls off with radius, from 1.6 near the center to 0.25 at GALAXY_R_MAX. Nobody crosses the canvas. Every particle just starts rotating from wherever it already is.

let tanNX = -rdy / r;

let tanNY = rdx / r;

let orbSpeed = lerp(1.6, 0.25, constrain(r / GALAXY_R_MAX, 0, 1)) * gt;

this.vx += tanNX * orbSpeed * 0.07;

this.vy += tanNY * orbSpeed * 0.07;
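For readers who want to try the two forces outside the sketch, here is a self-contained version under stated assumptions. The tangential part mirrors the snippet above; the radial spring is a guess at one possible form, and `GALAXY_R_MAX = 400`, `TARGET_R`, and `SPRING_K` are hypothetical values, as are the plain-JS stand-ins for p5’s lerp() and constrain().

```javascript
// Plain-JS stand-ins for p5's lerp() and constrain(), so this runs outside p5.
const lerp = (a, b, t) => a + (b - a) * t;
const constrain = (v, lo, hi) => Math.min(hi, Math.max(lo, v));

const GALAXY_R_MAX = 400; // assumed value; the real constant is not shown
const TARGET_R = 200;     // hypothetical rest radius for the radial spring
const SPRING_K = 0.0005;  // hypothetical "very weak" spring constant

// p: {x, y, vx, vy}. (cx, cy): galaxy center. gt: galaxy blend factor, 0..1.
function applyGalaxyForces(p, cx, cy, gt) {
  const rdx = p.x - cx;
  const rdy = p.y - cy;
  const r = Math.hypot(rdx, rdy);
  if (r < 1e-6) return; // no defined direction at the exact center

  // Unit tangent: the radial direction rotated by 90 degrees.
  const tanNX = -rdy / r;
  const tanNY = rdx / r;
  const orbSpeed = lerp(1.6, 0.25, constrain(r / GALAXY_R_MAX, 0, 1)) * gt;
  p.vx += tanNX * orbSpeed * 0.07;
  p.vy += tanNY * orbSpeed * 0.07;

  // Weak radial spring: pushes outward inside TARGET_R, pulls inward outside it.
  const pull = (TARGET_R - r) * SPRING_K * gt;
  p.vx += (rdx / r) * pull;
  p.vy += (rdy / r) * pull;
}
```

Since both forces are computed from the particle’s current position, nothing here needs a target slot, which is the property that removes the canvas-crossing problem.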

The glow for galaxy mode uses the same concentric stroke circle method from a reference I found: loop from d=0 to d=width, stroke each circle with brightness mapped from high to zero outward. The alpha uses a power curve so it falls off quickly at the edges. The trick is running galaxyGlowT on a separate lerp from galaxyT. The particles start moving into orbit immediately when you press Space, but the ambient halo breathes in much slower, at 0.0035 per frame vs 0.018 for the particle forces. You get the orbital motion first, then the light catches up.
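The effect of the two easing rates is easy to check numerically. The sketch below assumes both values are eased toward their target with a per-frame lerp at the rates quoted above; the helper name `stepGalaxyBlend` and the 60 fps figure are assumptions for illustration.

```javascript
// Plain-JS stand-in for p5's lerp().
const lerp = (a, b, t) => a + (b - a) * t;

const PARTICLE_RATE = 0.018; // per-frame easing for the orbital forces (from the text)
const GLOW_RATE = 0.0035;    // per-frame easing for the ambient halo (from the text)

// Advance both blend factors by `frames` frames toward `target` (1 = galaxy on).
function stepGalaxyBlend(state, target, frames = 1) {
  for (let i = 0; i < frames; i++) {
    state.galaxyT = lerp(state.galaxyT, target, PARTICLE_RATE);
    state.galaxyGlowT = lerp(state.galaxyGlowT, target, GLOW_RATE);
  }
  return state;
}

// 120 frames (two seconds at an assumed 60 fps) after pressing Space:
const state = stepGalaxyBlend({ galaxyT: 0, galaxyGlowT: 0 }, 1, 120);
console.log(state.galaxyT.toFixed(2), state.galaxyGlowT.toFixed(2));
```

Under these assumptions the particle forces are at roughly 0.89 of full strength after 120 frames while the halo is only at about 0.34, which matches the "motion first, light later" feel described above.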

The galaxy center follows wherever you release the mouse. This way the galaxy forms where the explosion happens, so the particles wrap around the galaxy center much more neatly than if the galaxy always sat in the middle of the canvas.

One line in mouseReleased():

galaxyCX = smoothX; galaxyCY = smoothY;

like honestly look how cool this looks now

The third mode came from a reference sketch by professor Jack that drew 1024 noise-driven circles around a fixed ring. Each circle’s radius came from Perlin noise sampled at a position that loops seamlessly around the ring’s circumference without a visible seam, the 999 + cos(angle)*0.5 trick. The output looks like a breathing cell membrane or a pulsar cross-section.

My first implementation was a direct port: 1024 fixed positions on the ring, circles drawn at each one. It worked but the blob had zero relationship to the particles underneath it. It just floated on top like a decal. Press Enter, blob appears. Press Enter again, blob disappears. The particles had nothing to do with any of it.

The version that actually felt right throws out the fixed ring entirely. Instead of iterating 1024 pre-calculated positions, drawMorphOverlay() iterates over the particle array. Each particle draws one circle centered at its own x, y. The noise seed comes from the particle’s live angle relative to morphCX/CY, so each particle carries a stable but slowly shifting petal radius with it as it moves.

let ang = atan2(p.y - morphCY, p.x - morphCX);

let nX = 999 + cos(ang) * 0.5 + cos(lp * TWO_PI) * 0.5;

let nY = 999 + sin(ang) * 0.5 + sin(lp * TWO_PI) * 0.5;

let r = map(noise(nX, nY, 555), 0, 1, height / 18, height / 2.2);
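The reason this sampling has no seam can be shown without p5 at all. The sketch below only computes the noise-space coordinates (the helper name `ringNoiseCoords` is hypothetical, and reading `lp` as a looping phase in the range 0 to 1 is an assumption) and compares the two sides of the atan2 discontinuity.

```javascript
const TWO_PI = Math.PI * 2;

// Noise-space coordinates for a particle at angle `ang` around the ring.
// lp is assumed to be a looping phase in [0, 1) that slowly animates the shape.
function ringNoiseCoords(ang, lp) {
  return {
    nX: 999 + Math.cos(ang) * 0.5 + Math.cos(lp * TWO_PI) * 0.5,
    nY: 999 + Math.sin(ang) * 0.5 + Math.sin(lp * TWO_PI) * 0.5,
  };
}

// atan2 jumps from +PI to -PI on the left side of the ring, but both angles
// map to the same point on a small circle in noise space, so there is no seam.
const a = ringNoiseCoords(Math.PI, 0.25);
const b = ringNoiseCoords(-Math.PI, 0.25);
console.log(Math.abs(a.nX - b.nX) < 1e-9, Math.abs(a.nY - b.nY) < 1e-9); // true true
```

The same argument applies to `lp`: because it enters through cos and sin of a full turn, the animation returns to its starting shape when `lp` wraps from 1 back to 0.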

The rendered circle size scales by mt * p.life * proximity. Proximity is how close the particle sits to the ring. Particles clustered at the ring draw full circles. Particles still traveling inward draw small faint ones. When you activate morph mode, the blob coalesces as particles converge. When you deactivate it, the blob tears apart as particles scatter outward, circles traveling with them. The disintegration happens at the particle level, not as a fading overlay.
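One possible shape for the proximity weighting is sketched below. The text only says that proximity measures how close a particle sits to the ring, so the linear falloff, the `falloff` parameter, and both function names are assumptions made for illustration.

```javascript
// Hypothetical proximity weight: 1 when the particle sits exactly on the ring,
// falling linearly to 0 once it is `falloff` pixels away from it.
function ringProximity(p, cx, cy, ringRadius, falloff) {
  const distFromCenter = Math.hypot(p.x - cx, p.y - cy);
  const distFromRing = Math.abs(distFromCenter - ringRadius);
  return Math.max(0, 1 - distFromRing / falloff);
}

// Final circle radius: the noise-driven radius r scaled by the three factors
// named in the text (mode blend mt, particle life, proximity).
function blobCircleRadius(r, mt, p, proximity) {
  return r * mt * p.life * proximity;
}
```

Because all three factors multiply, any one of them reaching zero (mode switched off, particle dying, particle far from the ring) is enough to make that particle’s circle vanish.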

The core glow stopped rendering at a fixed point too. It now computes the centroid of all particles within 2x the ring radius and renders there. The glow radius scales by count / particles.length, so a sparse ring is dim and a dense ring is bright. The light follows the mass.
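The centroid and density calculation described above could look roughly like this. The function name `glowAnchor` and the returned field names are assumptions; only the 2x ring radius cutoff and the count / particles.length scaling come from the text.

```javascript
// Returns the centroid of all particles within 2x the ring radius of (cx, cy),
// plus a 0..1 density that can scale the glow radius.
function glowAnchor(particles, cx, cy, ringRadius) {
  let sumX = 0;
  let sumY = 0;
  let count = 0;
  for (const p of particles) {
    if (Math.hypot(p.x - cx, p.y - cy) <= ringRadius * 2) {
      sumX += p.x;
      sumY += p.y;
      count++;
    }
  }
  // Nothing nearby (or an empty pool): fall back to the ring center with no glow.
  if (count === 0) return { x: cx, y: cy, density: 0 };
  return {
    x: sumX / count,
    y: sumY / count,
    density: count / particles.length,
  };
}
```

The early return also avoids dividing by zero when no particle is in range, which would otherwise produce NaN coordinates for the glow.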

Originally I had Space and Enter both cycling through modes in sequence: bio to galaxy to membrane and back. That made no sense for how I actually wanted to use it. Space now toggles bio and galaxy. Enter toggles bio and membrane. If you’re in galaxy and press Enter, galaxyT starts lerping back to zero while morphT starts lerping toward one simultaneously. The cross-fade between two non-bio modes works automatically because both lerps run every frame regardless of which mode is active.

if (keyCode === 32) {

currentMode = (currentMode === 1) ? 0 : 1;

} else if (keyCode === 13) {

currentMode = (currentMode === 2) ? 0 : 2;

if (currentMode === 2) morphAssigned = false;

}
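The automatic cross-fade follows from updating both blend values every frame, whichever mode is active. Here is a minimal sketch of that idea; `updateBlends` is a hypothetical name, the mode numbering (0 = bio, 1 = galaxy, 2 = membrane) is inferred from the key handler above, and the `MORPH_LERP` value is a placeholder because the real one is not given.

```javascript
// Plain-JS stand-in for p5's lerp().
const lerp = (a, b, t) => a + (b - a) * t;

const GALAXY_LERP = 0.018; // per-frame rate quoted for the particle forces
const MORPH_LERP = 0.02;   // placeholder; the real value is not given

// Called once per frame. Modes: 0 = bio, 1 = galaxy, 2 = membrane.
function updateBlends(state) {
  const galaxyTarget = state.currentMode === 1 ? 1 : 0;
  const morphTarget = state.currentMode === 2 ? 1 : 0;
  state.galaxyT = lerp(state.galaxyT, galaxyTarget, GALAXY_LERP);
  state.morphT = lerp(state.morphT, morphTarget, MORPH_LERP);
}

// Pressing Enter while fully in galaxy mode: one value falls as the other rises.
const state = { currentMode: 2, galaxyT: 1, morphT: 0 };
for (let frame = 0; frame < 60; frame++) updateBlends(state);
console.log(state.galaxyT.toFixed(2), state.morphT.toFixed(2));
```

No special case is needed for galaxy-to-membrane transitions, since each blend value only looks at whether its own mode is the current one.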

morphAssigned = false triggers the angle re-sort on the next frame, which maps current particle positions to evenly spaced ring angles in angular order. Same fix as the galaxy crossing problem: sort particles by angle from center, sort targets by angle, zip them in order. Nobody crosses the ring.
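The angle re-sort can be sketched as follows. This is an illustration rather than the sketch’s own code: `assignRingAngles` is a hypothetical name, and the half-step offset on the target angles is an assumption. The `morphAngle` field is the one declared on the particle class.

```javascript
const TWO_PI = Math.PI * 2;

// Gives each particle a target angle on the ring (stored in morphAngle),
// preserving the particles' current angular order around (cx, cy).
function assignRingAngles(particles, cx, cy) {
  const n = particles.length;
  const sorted = particles
    .map((p) => ({ p, ang: Math.atan2(p.y - cy, p.x - cx) }))
    .sort((a, b) => a.ang - b.ang);
  for (let j = 0; j < n; j++) {
    // Evenly spaced targets over the same -PI..PI range that atan2 returns,
    // so they are already sorted and zip directly with the sorted particles.
    sorted[j].p.morphAngle = -Math.PI + (j + 0.5) * (TWO_PI / n);
  }
}
```

Generating the targets in increasing order removes the need for a second sort: the j-th particle by angle simply takes the j-th slot.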

The sketch now has three fully functional modes with smooth bidirectional transitions. The galaxy holds its own as a visual. The membrane is the most satisfying of the three to toggle in and out of because the disintegration is legible. You can watch individual particles drag pieces of the blob away as they scatter.

I still haven’t solved the performance question on lower-end hardware. The membrane mode in particular runs 80 draw calls per particle at full opacity in additive blending, which is not nothing. My next steps are profiling this properly and figuring out whether the p5 web editor deployment is going to survive it. I’m cautiously optimistic but I’ve been cautiously optimistic before.

I faced many challenges throughout this project. I will list a couple below.

- The trails of the particles

- The explosion not being strong enough

- The behavior of pulling particles

- Performance issues

- The behavior of the particle explosion

- The texture of the galaxy (scrapped idea)

and honestly I could go on for days.

The thing that worked best for me is that I started very early and made a lot of progress early, so I had time to play around with ideas. Take the galaxy texture idea from the last post, for example: I had time to implement it and also scrap it because of performance issues. I also tried to write some shader code, but honestly that went horribly, and I didn’t want to learn all of that because the risk margin was high. Say I did learn it and spent days trying to perfect it, only to end up scrapping the idea. I also didn’t want to generate the whole shaders thing with AI; I actually wanted to at least understand what’s going on.

The most prominent issue was how the prints were going to look, as I didn’t know how to integrate trails beautifully and they looked very odd. I played around with the transparency of the background, trying many values until I found the sweet spot. My initial 3 modes were attract, condense, and explode, but those wouldn’t be conveyed well in the prints, so I switched to the modes we have right now.

Reflection

Honestly the user experience is in a better place than I expected it to be at this stage. The core loop, hold to charge, release to detonate, turned out to be one of those interactions that people understand immediately without any instructions, but I can’t say the same about pressing Enter and Space to toggle between modes haha. I’ve watched a few people pick it up cold and within thirty seconds they’re already testing how long they can hold before releasing. That’s a good sign. When an interaction teaches itself that quickly, you’ve probably found something worth keeping.

The three modes add a layer of depth that I wasn’t sure would land. Galaxy mode feels the most coherent because the visual logic is obvious: particles orbit a center, a halo breathes outward, the whole thing rotates slowly. Membrane mode is more abstract and I think some people will find it confusing on first contact. The blob emerging from particle convergence reads as intentional once you’ve seen it a few times, but the first time it happens it might just look like a bug. That’s a documentation problem as much as a design problem. A very subtle UI hint, maybe a faint key label in the corner, might do enough work there without breaking the aesthetic.

The transition speeds feel right in galaxy and a little slow in membrane. When you press Enter to leave membrane mode, the blob takes long enough to dissolve that it starts feeling like lag rather than a designed dissolve. I want to tighten the MORPH_LERP value and see if a slightly faster exit reads better while keeping the entrance speed the same. Entering slow, leaving fast, might be the right rhythm for that mode.
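The "entering slow, leaving fast" idea amounts to picking the lerp rate by direction. A minimal sketch, assuming the current single MORPH_LERP is split in two; the names `MORPH_LERP_IN`, `MORPH_LERP_OUT`, and `stepMorph`, and both rate values, are placeholders to be tuned by eye.

```javascript
// Plain-JS stand-in for p5's lerp().
const lerp = (a, b, t) => a + (b - a) * t;

const MORPH_LERP_IN = 0.02;  // placeholder entrance rate
const MORPH_LERP_OUT = 0.06; // placeholder exit rate, three times faster

// Eases morphT toward its target, choosing the rate by direction of travel.
function stepMorph(morphT, membraneActive) {
  const target = membraneActive ? 1 : 0;
  const rate = membraneActive ? MORPH_LERP_IN : MORPH_LERP_OUT;
  return lerp(morphT, target, rate);
}
```

Calling `morphT = stepMorph(morphT, currentMode === 2)` once per frame would keep the entrance unchanged while letting the exit finish in roughly a third of the time.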

Performance is the thing I’m least settled about. On my machine in VS Code the sketch runs clean. The membrane mode specifically concerns me because it runs one draw call per particle per frame in additive blending, and additive blending is expensive in ways that only become obvious at 600 particles so it’s a little bit slower there.

The one thing I genuinely would love to add is audio. Not full sound design, something minimal. A low frequency hum that rises in pitch as charge builds, a short percussive hit on release scaled to the explosion tier. The sketch is very silent right now and I think sound would close a gap in the experience that visuals alone can’t. The charge accumulation in particular has this tension that wants a corresponding audio texture.

The naming situation I mentioned at the start, Melancholic Bioluminescence sounding like a Spotify playlist, has not resolved itself. If anything the addition of galaxy mode and the membrane makes the name less accurate. The name now is GALACTIC.

REFERENCES

p5.js Web Editor | 20260211-decoding-nature-w4-blob-example

Inigo Quilez :: computer graphics, maths, shaders, fractals, demoscene

Also yes, AI helped with a lot of the math again: the Keplerian orbital speed scaling, the seamless noise ring sampling, the proximity weighting in the blob. I understand what all of it does now though, which I count as a win. I use AI not to do my work, but as a tool that helps me get to what I want, like a mentor. I am very satisfied with this output, as I built the architecture, I built the algorithms, I designed everything beforehand, and when things felt stuck I used AI as my mentor. The section where I used AI the most is filling in a lot of values for things, because I couldn’t get values that felt nice. Here’s an example below.

class Particle {

constructor(x, y) {

this.pos = createVector(x, y);

this.vel = createVector(0, 0);

this.nox = random(10000);

this.noy = random(10000);

this.ns = random(0.0015, 0.004);

this.driftSpd = random(0.5, 1.2);

this.baseSize = random(1.8, 4);

this.size = this.baseSize;

this.baseHue = random(228, 288);

this.hue = this.baseHue;

this.sat = random(55, 85);

this.bri = random(75, 100);

this.alpha = random(40, 70);

this.maxAlpha = this.alpha;

this.life = 1;

this.dead = false;

this.wobAmp = random(0.3, 0.9);

this.wobFreq = random(2, 4.5);

this.orbSpd = random(0.015, 0.04) * (random() > 0.5 ? 1 : -1);

this.drag = random(0.93, 0.97);

this.explSpd = random(0.6, 1.4);

this.rotDrift = random(-0.35, 0.35);

this.absorbed = false;

this.trailTimer = 0;

this.suctionTrailTimer = 0;

this.behavior = BEHAVE_RADIAL;

this.spiralDir = random() > 0.5 ? 1 : -1;

this.spiralTight = random(0.03, 0.09);

this.boomerangTimer = 0;

this.boomerangPeak = random(0.3, 0.5);

this.flutterFreqX = random(5, 12);

this.flutterFreqY = random(5, 12);

this.flutterAmp = random(2, 6);

this.cometTrailRate = 0;

this.explodeOrigin = createVector(0, 0);

this.morphAngle = random(TWO_PI);

}

// ... methods omitted in this snippet

}