The Concept

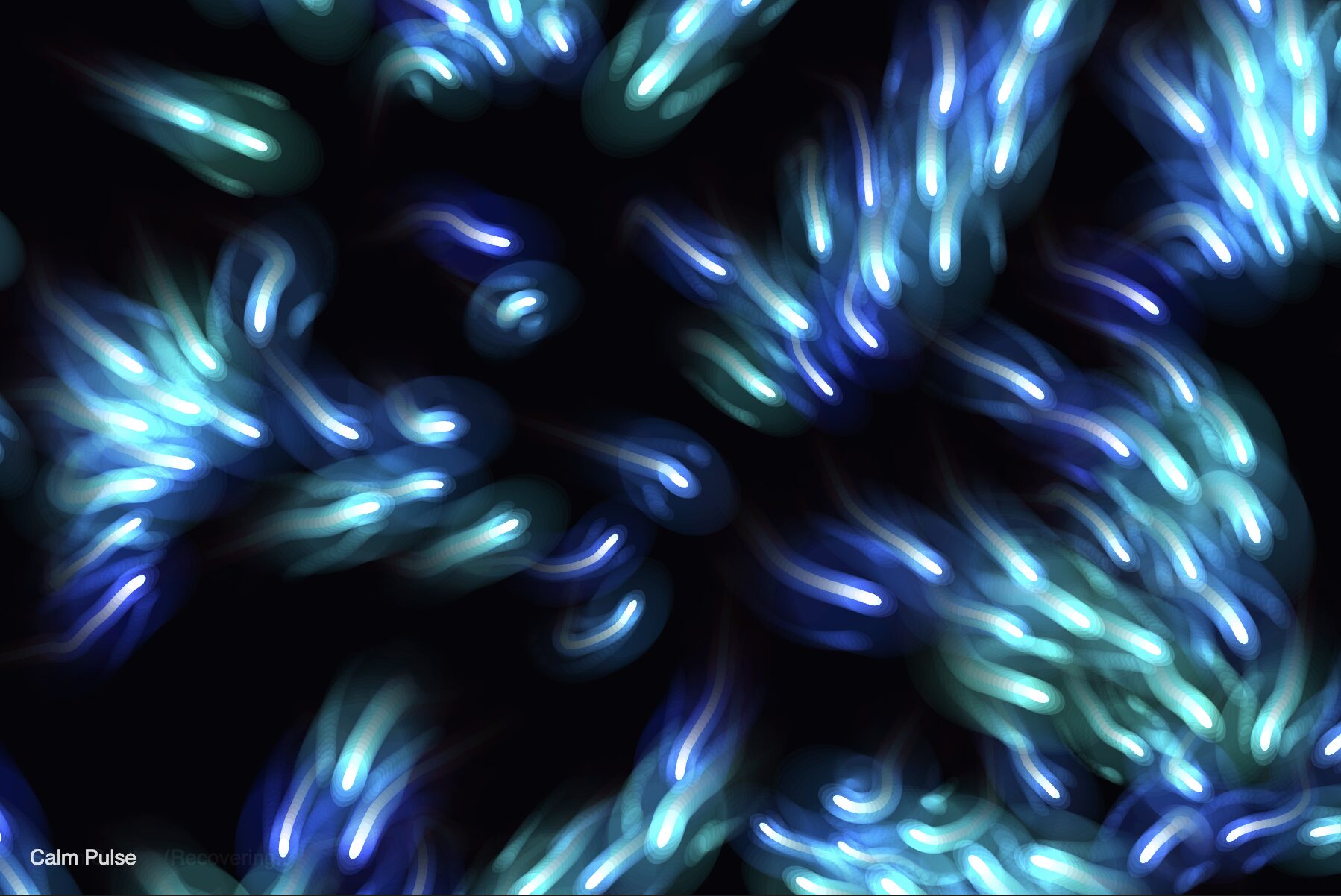

After exploring Memo Akten’s work, I got obsessed with how he uses mathematical functions to create these organic, almost living visuals. His pieces feel like they’re breathing, expanding and contracting in this hypnotic rhythm.

I wanted to create something that captures that same feeling using Simple Harmonic Motion. Instead of pendulums, I thought: what if I used the sine wave to control the size, position, and color of circles? Like watching something breathe or pulse to an invisible heartbeat.

The idea was to start with one breathing circle, then expand it into grids and layers, creating interference patterns that feel natural and meditative. Think of it like ripples in a pond, but with every ripple fixed in place and constantly shifting in size.

The Physics Behind It

Simple Harmonic Motion shows up everywhere in nature – springs, sound waves, light waves, even the motion of atoms. At its core, it’s just the sine function:

position = amplitude × sin(frequency × time + phase)

Where:

- Amplitude controls how far it moves

- Frequency controls how fast it oscillates

- Phase offsets the starting point

The beautiful thing about sine waves is that when you combine multiple ones with different parameters, you get these complex, organic patterns. It’s the foundation of how we understand waves in general.
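
To make the formula concrete, here is a minimal sketch in plain JavaScript, so it runs outside p5.js (inside a sketch you would use p5's `sin()` and `TWO_PI` instead). The names `shm` and `sumOfWaves` and the sample wave parameters are placeholders for illustration, not code from the project.

```javascript
// Simple harmonic motion: position = amplitude * sin(frequency * time + phase).
// `frequency` is in radians per unit of time, `phase` is in radians.
function shm(amplitude, frequency, time, phase = 0) {
  return amplitude * Math.sin(frequency * time + phase);
}

// Superposition: add up several waves, each with its own parameters.
function sumOfWaves(waves, time) {
  return waves.reduce(
    (total, w) => total + shm(w.amplitude, w.frequency, time, w.phase),
    0
  );
}

// Two waves with different frequencies (values chosen for illustration).
const waves = [
  { amplitude: 1, frequency: 0.02, phase: 0 },
  { amplitude: 1, frequency: 0.035, phase: Math.PI / 2 },
];

// Sample the combined wave every 50 time steps.
const samples = [];
for (let t = 0; t <= 500; t += 50) {
  samples.push(sumOfWaves(waves, t));
}
console.log(samples.map((v) => v.toFixed(2)).join(" "));
```

With these two frequencies the combined wave takes about 1257 time steps to repeat, four times longer than the slower wave on its own, which is a small taste of why layered sines look less mechanical.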

Building It Up: Milestones & Challenges

Milestone 1: Single Breathing Circle

I started with the most basic concept – a single circle that grows and shrinks using a sine wave. This was about getting the rhythm right and understanding how amplitude and frequency affect the motion.

Here’s Milestone 1:

This proved the concept – a circle that breathes in and out smoothly. The challenge was finding the right frequency. Too fast, and it looks jittery. Too slow, and it’s boring. I settled on a frequency of 0.02, which gives it that calm, meditative breathing pace.
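
As a rough sketch of this milestone in plain JavaScript (not the sketch's actual code): only the frequency 0.02 comes from the text. The resting size, the amplitude, and the idea that time advances by 1 per frame at 60 frames per second are assumptions made for illustration.

```javascript
const FREQUENCY = 0.02; // radians per frame (value from the post)
const BASE_SIZE = 200;  // assumed resting diameter in pixels
const AMPLITUDE = 80;   // assumed swing around the resting diameter

// Diameter of the breathing circle at a given frame.
function breathingDiameter(frame) {
  return BASE_SIZE + AMPLITUDE * Math.sin(FREQUENCY * frame);
}

// One full breath takes 2 * PI / frequency frames.
const framesPerBreath = (2 * Math.PI) / FREQUENCY;
const secondsPerBreath = framesPerBreath / 60; // assuming 60 frames per second

console.log(framesPerBreath.toFixed(0), "frames per breath");
console.log(secondsPerBreath.toFixed(1), "seconds per breath at 60 fps");
```

Under those assumptions, 0.02 works out to roughly 314 frames, a little over five seconds per breath. In a p5.js sketch, `draw()` could pass `frameCount` to `breathingDiameter` and hand the result to `circle()`.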

Milestone 2: Grid of Oscillating Circles

Next, I wanted to fill the whole canvas with breathing circles. I created a grid where each circle’s phase is determined by its distance from the center, creating a ripple effect that propagates outward.

Here’s Milestone 2:

The wave propagates from the center outward! Because each circle’s phase depends on its distance from the center, you can see waves of expansion and contraction flowing across the grid like a ripple.
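
The distance-to-phase idea can be sketched like this in plain JavaScript. The canvas size, spacing, `phasePerPixel` value, and the function names are all assumptions for illustration, and so is the choice to subtract the phase so that outer circles lag behind the center.

```javascript
// Build a grid of circle centers and give each one a phase based on its
// distance from the canvas center. All sizes here are assumed values.
function buildGrid(width, height, spacing, phasePerPixel) {
  const cx = width / 2;
  const cy = height / 2;
  const cells = [];
  for (let x = spacing / 2; x < width; x += spacing) {
    for (let y = spacing / 2; y < height; y += spacing) {
      const distance = Math.hypot(x - cx, y - cy);
      // A negative phase makes far circles lag behind the center,
      // so the crest appears to travel outward.
      cells.push({ x, y, phase: -distance * phasePerPixel });
    }
  }
  return cells;
}

// Diameter of one grid circle at a given frame.
function cellDiameter(cell, frame, base = 30, amplitude = 12, frequency = 0.02) {
  return base + amplitude * Math.sin(frequency * frame + cell.phase);
}

const grid = buildGrid(400, 400, 50, 0.05);
console.log(grid.length, "circles");
```

Circles at the same distance from the center share a phase, so they swell and shrink together as rings, which is what reads as a ripple.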

Milestone 3: Multi-Layer Concentric System

This is where it got really interesting. I went back to a single point but added multiple concentric layers, each oscillating at a different frequency. The code I’m most proud of is the layering system:

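    // Three layers, each with its own frequency and starting phase
    for (let layer = 0; layer < 3; layer++) {
      let layerFreq = 0.02 + layer * 0.015;   // 0.02, 0.035, 0.05
      let layerPhase = layer * TWO_PI / 3;    // 0, 120, 240 degrees
      // Draw from the outermost circle inward
      for (let i = numCircles - 1; i >= 0; i--) {
        let phase = i * PI / 8 + layerPhase;
        let size = (baseSize + i * 45) + amplitude * sin(time * layerFreq + phase);
        // ... draw circle
      }
    }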

Offsetting each layer’s phase by 120 degrees (TWO_PI / 3) creates a kind of three-part harmony. When one layer is expanding, another is contracting, which gives the piece constant motion and depth.
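
To see what the 120 degree offsets do numerically, here is a standalone version of the same loop in plain JavaScript that returns sizes instead of drawing. The values for `NUM_CIRCLES`, `BASE_SIZE`, and `AMPLITUDE` are not given in the post, so the ones below are assumptions.

```javascript
// Assumed values: the post does not state these three.
const NUM_CIRCLES = 5;
const BASE_SIZE = 40;
const AMPLITUDE = 20;

// Returns the diameter of every ring in every layer at a given time,
// using the frequency and phase rules from the layering loop.
function layerSizes(time) {
  const layers = [];
  for (let layer = 0; layer < 3; layer++) {
    const layerFreq = 0.02 + layer * 0.015;
    const layerPhase = (layer * 2 * Math.PI) / 3; // 0, 120, 240 degrees
    const sizes = [];
    for (let i = NUM_CIRCLES - 1; i >= 0; i--) {
      const phase = (i * Math.PI) / 8 + layerPhase;
      const swing = AMPLITUDE * Math.sin(time * layerFreq + phase);
      sizes.push(BASE_SIZE + i * 45 + swing);
    }
    layers.push(sizes);
  }
  return layers;
}

// At time 0 the three innermost rings sit 120 degrees apart on the sine
// curve, so their offsets from the resting size cancel out.
const atStart = layerSizes(0).map((sizes) => sizes[sizes.length - 1] - BASE_SIZE);
console.log(atStart.map((v) => v.toFixed(2)).join(" "));
```

Because each layer also has its own frequency, that even 120 degree spacing only holds at the start. Afterwards the layers drift relative to each other, which is where the slowly shifting patterns come from.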

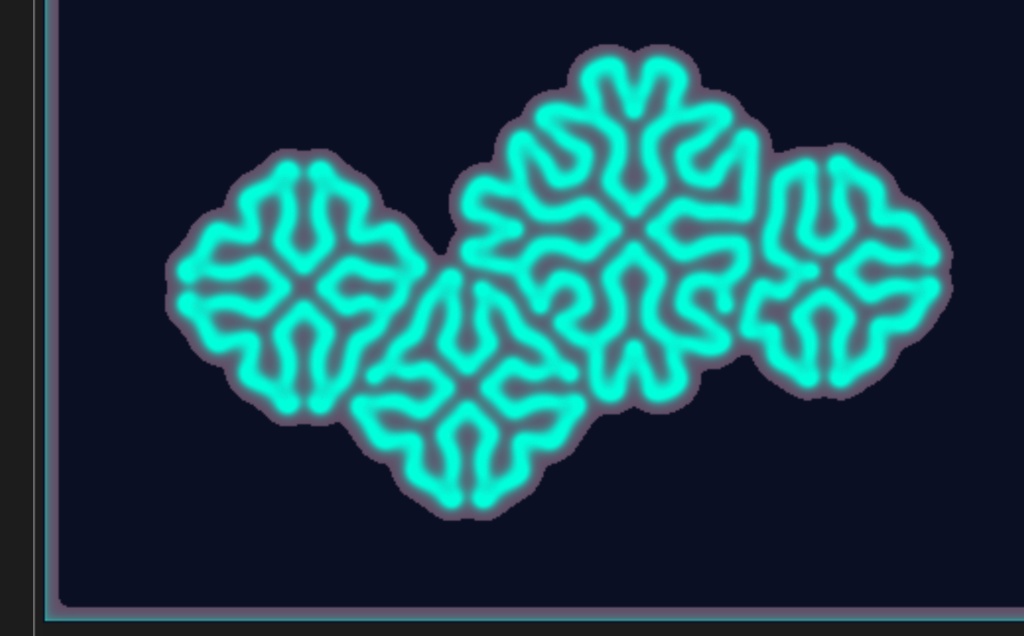

Here’s Milestone 3:

The three-layer system creates this incredible depth where you can see different rhythms happening simultaneously. It’s almost musical – like hearing three different instruments playing in harmony. The circles breathe in and out of sync, creating these beautiful interference patterns.

Milestone 4: Combining Grid + Multi-Layer (The Final Form)

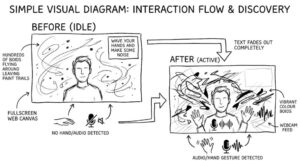

For the final version, I combined everything – the grid layout from Milestone 2 with the multi-layer system from Milestone 3. Each point on the grid now has its own concentric breathing system, and they all ripple together based on distance from the center.

This is where the magic happens. You get the propagating wave effect from the grid, but with the depth and complexity of the multi-layer system. It’s like watching a field of flowers breathing together in the wind.

Here’s the final version:

The final version creates this hypnotic field of breathing circles. Each cluster has its own internal rhythm (the three layers), but they’re all synchronized by the wave propagating from the center. Sometimes they all sync up for a moment, then slowly drift apart again into complex interference patterns.
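
One way to picture the combination is as a single phase made of three parts: the layer offset, the ring offset, and the ripple lag from the grid. The sketch below is plain JavaScript under stated assumptions, not the final sketch's code. The layer and ring rules are the ones from Milestone 3, while `baseSize`, `ringStep`, `amplitude`, `distancePhase`, and the negative sign on the ripple term are guesses for illustration.

```javascript
// Diameter of one ring, in one layer, at one grid point.
function ringDiameter({ distance, layer, ring, time }, opts = {}) {
  // Assumed defaults: none of these four values appear in the post.
  const { baseSize = 6, ringStep = 8, amplitude = 4, distancePhase = 0.03 } = opts;

  const layerFreq = 0.02 + layer * 0.015;        // rule from Milestone 3
  const layerPhase = (layer * 2 * Math.PI) / 3;  // rule from Milestone 3
  const ringPhase = (ring * Math.PI) / 8;        // rule from Milestone 3
  const ripplePhase = -distance * distancePhase; // grid ripple (sign assumed)

  const phase = layerPhase + ringPhase + ripplePhase;
  return baseSize + ring * ringStep + amplitude * Math.sin(time * layerFreq + phase);
}

const center = ringDiameter({ distance: 0, layer: 0, ring: 0, time: 0 });
const edge = ringDiameter({ distance: 200, layer: 0, ring: 0, time: 0 });
console.log(center.toFixed(2), edge.toFixed(2));
```

Keeping the ripple lag as a separate term means every cluster runs the same three-layer rhythm and only its starting point shifts with distance from the center.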

I added keyboard controls to adjust the frequency in real time so you can find your own favorite rhythm. Press ‘H’ to hide the UI for a cleaner view, and ‘S’ to save a frame.
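
A minimal sketch of how such controls could be wired, written as a pure function so it runs without p5.js. ‘H’ and ‘S’ come from the description above. The ‘+’ and ‘-’ keys for frequency, the step size, and the limits are hypothetical, since the post does not say which keys adjust the frequency.

```javascript
// Pure key handler: takes the current settings and a key, returns new settings.
// 'h' and 's' follow the post; '+' and '-' and their step are assumptions.
function handleKey(state, key) {
  switch (key.toLowerCase()) {
    case "h": // toggle the on-screen UI
      return { ...state, showUI: !state.showUI };
    case "s": // flag that the next frame should be saved
      return { ...state, saveRequested: true };
    case "+": // speed up, capped at an assumed maximum
      return { ...state, frequency: Math.min(state.frequency + 0.005, 0.1) };
    case "-": // slow down, capped at an assumed minimum
      return { ...state, frequency: Math.max(state.frequency - 0.005, 0.005) };
    default:  // ignore every other key
      return state;
  }
}

let state = { frequency: 0.02, showUI: true, saveRequested: false };
state = handleKey(state, "H");
state = handleKey(state, "+");
console.log(state);
```

In p5.js this could be called from the `keyPressed()` callback with the global `key`, and a pending `saveRequested` could trigger `saveCanvas()` at the end of `draw()`.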

Reflection & Future Work

This project really opened my eyes to how much beauty you can create with just the sine function. By layering multiple oscillations with different frequencies, phases, and amplitudes, you get these rich, complex patterns that feel alive and organic.

What I learned:

- The sine wave is amazing for creating organic motion

- Layering multiple frequencies creates visual richness and depth that a single oscillation can’t achieve

- Phase offsets are crucial – they prevent everything from syncing up and create that wave propagation effect

- Combining grid layouts with complex per-point systems creates the most interesting results

- Even simple mathematical rules can create patterns that feel natural and alive

What I’d add next:

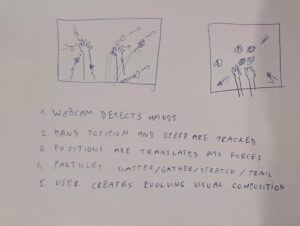

- Audio reactivity – make it respond to music, with frequencies mapped to sound frequencies

- 3D version – spheres breathing in 3D space with depth and perspective

- Mouse interaction – let users disturb the field and watch the waves respond

- Different grid patterns – hexagonal grids, Voronoi cells (I learned about these in my parametric design lab class with Prof. Aya), or organic spacing

- Color schemes – different palettes for different moods

- More control parameters – adjust layer count, circle count, amplitude separately

- Recording mode – export as video to create seamless loops (I bet this could go viral on Instagram Reels)

The most hypnotic part is just letting it run and watching the patterns emerge. The waves flow across the grid, the layers breathe in and out of sync, and sometimes everything aligns for just a moment before drifting apart again. It’s meditative – I’ve caught myself just staring at it, watching the patterns shift and evolve.