Final Project Proposal: Boids, Audio, and Hand Gestures

Concept and Artistic Intention

For my final project, I want to build on my ninth assignment, Ephemeral Flocks, and turn it into something much more interactive. In that project, I worked with boids, which connects directly to the idea of autonomous agents from The Nature of Code.

Visually, it was really interesting to watch the flock respond to the webcam feed; it felt like the boids were “decoding” reality in their own way. At the same time, though, the experience felt a bit passive: the user basically just clicked and watched, and I think that limited the potential of the piece.

This time, I want to bring the human back into the system in a more active way. The idea is for the user to feel like they’re conducting the flock, almost like an orchestra conductor. Rather than settling into a frozen frame, the boids will constantly move over a live video feed, painting it with a messy, expressive texture inspired by Van Gogh or Studio Ghibli.

But the key difference is that now the system will respond to the user’s body and voice: it will listen and react, not just exist.

Interaction Methodology

I’m planning to use two main types of input to control the behavior of the boids:

- Hand gestures (via ml5)

- Microphone input (via p5.sound)

The hand will act as a kind of steering force. Wherever the user moves their hand on the screen, it will create an attraction vector that pulls the flock toward that position. So you’re literally guiding them through space.
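One way to turn the hand position into that attraction vector is Craig Reynolds’ “seek” steering behavior, the same one used in The Nature of Code: aim at the target at full speed, then steer by the difference between that desired velocity and the current one. Below is a minimal, framework-free TypeScript sketch, assuming the hand tracker has already produced a point `hand` in canvas coordinates. `seekHand`, `maxSpeed`, and `maxForce` are hypothetical names; in the actual p5.js sketch the helpers would be `p5.Vector` methods such as `sub()`, `setMag()`, and `limit()`.

```typescript
// Minimal 2D vector type and helpers (p5.Vector provides equivalents in the real sketch).
type Vec = { x: number; y: number };

const sub = (a: Vec, b: Vec): Vec => ({ x: a.x - b.x, y: a.y - b.y });
const mag = (v: Vec): number => Math.hypot(v.x, v.y);

// Rescale v to length `len`; a zero vector stays zero.
function setMag(v: Vec, len: number): Vec {
  const m = mag(v);
  return m === 0 ? { x: 0, y: 0 } : { x: (v.x / m) * len, y: (v.y / m) * len };
}

// Shorten v to length `max` if it is longer; otherwise return it unchanged.
function limit(v: Vec, max: number): Vec {
  return mag(v) > max ? setMag(v, max) : v;
}

// "Seek" steering: head for the hand at full speed, but turn by at most maxForce.
function seekHand(pos: Vec, vel: Vec, hand: Vec, maxSpeed: number, maxForce: number): Vec {
  const desired = setMag(sub(hand, pos), maxSpeed); // ideal velocity: straight at the hand
  const steer = sub(desired, vel);                   // correction from the current velocity
  return limit(steer, maxForce);                     // gentle pull instead of a snap
}

// A resting boid at the origin with the hand 100 px to its right.
const force = seekHand({ x: 0, y: 0 }, { x: 0, y: 0 }, { x: 100, y: 0 }, 4, 0.2);
console.log(force); // { x: 0.2, y: 0 }
```

Capping the result at `maxForce` is what should make the flock swoop toward the hand rather than snap to it; when no hand is detected, the sketch can simply skip this force for that frame.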

The microphone will control how chaotic the system becomes. If the user is quiet, the boids will behave calmly: they’ll stay cohesive and aligned and move smoothly as a group. But if the user makes noise (like clapping or speaking loudly), the volume will increase the separation force, causing the boids to scatter and behave more unpredictably.
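A possible way to map loudness onto the flocking rules is to normalize the mic level into a 0 to 1 “chaos” value and use it to interpolate the weights of the three classic boid forces. This assumes the level comes from something like p5.sound’s `p5.AudioIn` and its `getLevel()` method, which reports amplitude between 0.0 and 1.0. `weightsFromLevel`, `NOISE_FLOOR`, `LOUD`, and the weight ranges are hypothetical starting values to tune by ear.

```typescript
// Clamp and linear interpolation (p5.js has constrain() and lerp() built in).
const clamp = (v: number, lo: number, hi: number): number => Math.min(hi, Math.max(lo, v));
// This form returns exactly a at t = 0 and exactly b at t = 1.
const lerp = (a: number, b: number, t: number): number => a * (1 - t) + b * t;

interface FlockWeights {
  separation: number;
  alignment: number;
  cohesion: number;
}

// Hypothetical tuning constants for a mic level in the 0..1 range.
const NOISE_FLOOR = 0.02; // below this, treat the room as silent
const LOUD = 0.3;         // at or above this, the flock is fully "chaotic"

function weightsFromLevel(level: number): FlockWeights {
  // 0 = calm, 1 = full chaos.
  const chaos = clamp((level - NOISE_FLOOR) / (LOUD - NOISE_FLOOR), 0, 1);
  return {
    separation: lerp(1.0, 4.0, chaos), // louder: boids push each other away harder
    alignment: lerp(1.0, 0.3, chaos),  // louder: weaker shared heading
    cohesion: lerp(1.0, 0.2, chaos),   // louder: weaker pull toward the group center
  };
}

console.log(weightsFromLevel(0));   // { separation: 1, alignment: 1, cohesion: 1 }
console.log(weightsFromLevel(0.5)); // { separation: 4, alignment: 0.3, cohesion: 0.2 }
```

Because the weights change continuously with volume, speaking softly should only loosen the flock a little, while a sharp clap pushes it toward full scatter for as long as the level stays high.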

So in a way, the user is constantly balancing control and chaos through movement and sound. This directly ties back to the forces and vector systems we’ve been working with in class, but turns them into something you can physically feel and experiment with.
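In the Nature of Code model of motion, all of these influences meet in one place: each frame, the weighted forces are summed into the boid’s acceleration, which then updates velocity and position. A minimal sketch of that step follows. It assumes the individual separation, alignment, cohesion, and hand forces have already been computed (the `Steering` and `Weights` shapes and `updateBoid` are hypothetical), that every boid has mass 1 so force equals acceleration, and that the hand’s pull is added without a mic-dependent weight.

```typescript
type Vec = { x: number; y: number };

const add = (a: Vec, b: Vec): Vec => ({ x: a.x + b.x, y: a.y + b.y });
const scale = (v: Vec, s: number): Vec => ({ x: v.x * s, y: v.y * s });

// Shorten v to length `max` if it is longer.
function limit(v: Vec, max: number): Vec {
  const m = Math.hypot(v.x, v.y);
  return m > max ? scale(v, max / m) : v;
}

interface Boid {
  pos: Vec;
  vel: Vec;
  acc: Vec;
}

// Hypothetical: the steering forces already computed for one boid this frame.
interface Steering {
  separation: Vec;
  alignment: Vec;
  cohesion: Vec;
  hand: Vec;
}

// Hypothetical: mic-driven weights for the three flocking rules.
interface Weights {
  separation: number;
  alignment: number;
  cohesion: number;
}

// One frame of motion: sum weighted forces into acceleration (mass = 1),
// integrate velocity (capped at maxSpeed) and position, then clear acceleration.
function updateBoid(b: Boid, s: Steering, w: Weights, maxSpeed: number): void {
  b.acc = add(b.acc, scale(s.separation, w.separation));
  b.acc = add(b.acc, scale(s.alignment, w.alignment));
  b.acc = add(b.acc, scale(s.cohesion, w.cohesion));
  b.acc = add(b.acc, s.hand); // assumption: the hand's pull is not mic-weighted
  b.vel = limit(add(b.vel, b.acc), maxSpeed);
  b.pos = add(b.pos, b.vel);
  b.acc = { x: 0, y: 0 }; // forces are re-accumulated from scratch next frame
}

const boid: Boid = { pos: { x: 0, y: 0 }, vel: { x: 1, y: 0 }, acc: { x: 0, y: 0 } };
updateBoid(
  boid,
  { separation: { x: 0, y: 1 }, alignment: { x: 0, y: 0 }, cohesion: { x: 0, y: 0 }, hand: { x: 0.5, y: 0 } },
  { separation: 2, alignment: 1, cohesion: 1 },
  5,
);
console.log(boid.pos); // { x: 1.5, y: 2 }
```

Keeping the hand force outside the mic weighting is one design choice among several; it would mean that even a noisy, scattered flock still drifts toward the user’s hand.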

Initial p5.js Sketch [Please open it in the Web Editor, grant webcam permission, and wave your hand]

Design of the Canvas

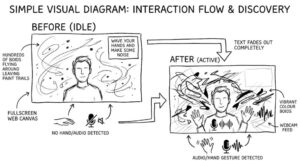

I want the visual experience to feel minimal and immersive, almost like an installation rather than a typical interactive app. That means no buttons and no heavy UI, just the system itself.

Layout (rough idea):

[Image generated using Gemini]

- A fullscreen web canvas

- The live webcam feed sits in the background

- Hundreds of boids move across the screen, leaving painterly trails over the video

- In the top-right corner, there’s a small line of text:

“wave your hands and make some noise”

Once the system detects movement or sound, that text fades away so it doesn’t distract from the visuals.
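The fading hint can be driven by the same two inputs. The sketch below assumes the main loop knows, each frame, whether the hand tracker found a hand and what the current mic level is; `HintText`, `SOUND_THRESHOLD`, and `FADE_PER_FRAME` are hypothetical names and values. Once either input is detected, the text fades out over 64 frames (roughly a second at 60 fps) and stays hidden.

```typescript
// Hypothetical tuning values.
const SOUND_THRESHOLD = 0.05; // mic level (0..1) that counts as "making noise"
const FADE_PER_FRAME = 4;     // alpha removed per frame: 255 -> 0 in 64 frames

class HintText {
  alpha = 255; // p5-style alpha: 255 fully visible, 0 invisible
  private dismissed = false;

  // Call once per frame with the current hand and mic state.
  update(handDetected: boolean, micLevel: number): void {
    // The first sign of interaction starts the fade; the hint never returns.
    if (handDetected || micLevel > SOUND_THRESHOLD) this.dismissed = true;
    if (this.dismissed) this.alpha = Math.max(0, this.alpha - FADE_PER_FRAME);
  }

  get visible(): boolean {
    return this.alpha > 0;
  }
}

const hint = new HintText();
hint.update(false, 0.01); // no hand, near-silence: hint stays at full alpha
hint.update(true, 0);     // hand detected: fading begins
console.log(hint.alpha);  // 251
```

In p5.js, `hint.alpha` could then be passed as the alpha argument to `fill()` before drawing the line of text.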

The important part is that all control comes from the user’s body and voice. There are no sliders or settings, just interaction. The goal is for users to slowly discover how their gestures and sounds shape the digital painting over time, without being explicitly told how it works.