Ephemeral Flocks: Painting with Boids and Live Video

Concept & Inspiration

For this project, I wanted to explore the intersection of organic, emergent systems and digital surveillance/capture. The concept revolves around using a simulated flocking system (boids) not just as moving entities, but as autonomous painters that “decode” and reconstruct reality.

The sketch operates in three distinct phases, creating a natural cycle of tension and release:

- The Live Feed (Reality): The user sees a standard, real-time webcam feed.

-

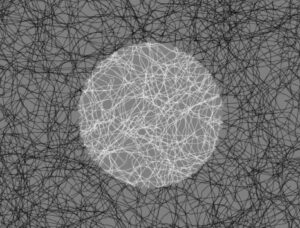

The Freeze & Draw (Tension/Emergence): Upon clicking, time stops. A snapshot is captured, and suddenly hundreds of boids swarm the canvas. Instead of clearing the background, they leave continuous trails, acting as a generative brush. They read the brightness of the frozen pixels beneath them, mapping the light and shadow of the captured moment through their chaotic flight paths.

- The Dissolve (Release): After fifteen seconds of frantic drawing, the image slowly dissolves back into the live video feed, erasing the boids’ hard work and resetting the cycle.

Visually and conceptually, this was heavily inspired by the generative artwork of Ryoichi Kurokawa and Robert Hodgin, who both excel at blending chaotic particle systems with structured, recognizable forms, making the digital feel tactile and natural. The specific mechanic of using boids as a “brightness brush” was directly inspired by Valerio Viperino’s brilliant “Drawing with boids” experiment.

Code Highlight: The Autonomous Brush

The part of the code I am most proud of is within the Boid class’s show() method. Rather than telling the boids what to draw, I simply tell them how to see.

    show() {
      // Assumes snap.loadPixels() was called once when the snapshot was taken,
      // so that snap.pixels is populated.

      // Constrain coordinates to prevent array out-of-bounds errors
      let px = constrain(floor(this.pos.x), 0, snap.width - 1);
      let py = constrain(floor(this.pos.y), 0, snap.height - 1);

      // Calculate 1D pixel array index (4 entries per pixel: R, G, B, A)
      let index = (px + py * snap.width) * 4;

      // Extract RGB and calculate rough brightness
      let r = snap.pixels[index];
      let g = snap.pixels[index + 1];
      let b = snap.pixels[index + 2];
      let brightness = (r + g + b) / 3;

      // Draw the trail mapped to the pixel brightness
      stroke(brightness, 150);
      strokeWeight(1);
      line(this.prevPos.x, this.prevPos.y, this.pos.x, this.pos.y);
    }

This snippet is the bridge between the physical world (the camera pixel array) and the simulated world (the boids’ coordinates). By tying the stroke color to the underlying image brightness and lowering the opacity (an alpha of 150 out of 255), the boids slowly layer their trails to create an etching-like quality.
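To see why semi-transparent strokes build up in layers rather than overwriting the canvas in one go, the blending can be worked through numerically. This is a minimal sketch that assumes the default source-over blending of a 2D canvas and a single grayscale channel; `layerStroke` is a hypothetical helper for illustration, not part of the sketch or of p5.js.

```javascript
// Hypothetical helper (not from the original sketch): models one grayscale
// channel after `passes` fully overlapping strokes.
// Assumes default "source-over" blending:
//   result = old + (stroke - old) * alpha
function layerStroke(background, strokeValue, alpha255, passes) {
  const a = alpha255 / 255; // stroke(gray, 150) uses a 0-255 alpha range by default
  let value = background;
  for (let i = 0; i < passes; i++) {
    value = value + (strokeValue - value) * a;
  }
  return value;
}

// A white trail (255) drawn repeatedly over a black canvas (0) at alpha 150.
for (const passes of [1, 2, 3]) {
  console.log(passes, layerStroke(0, 255, 150, passes).toFixed(1));
}
```

Each pass closes a fixed fraction of the remaining gap between the canvas and the stroke color, so the value approaches the target without jumping straight to it. A one-pixel anti-aliased line usually covers each pixel only partially, so in practice the build-up is likely slower than this idealized model suggests.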

Video Documentation

Embedded Sketch (please open in the web editor and grant webcam permission)

Milestones & Challenges

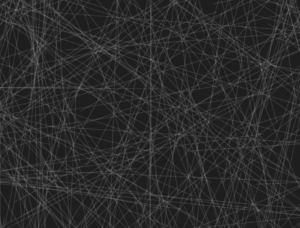

Milestone 1: Establishing the Trails

Before integrating the camera, the first major hurdle was getting the boids to leave a continuous trail without the sketch crashing or dissolving into static. I had to modify the standard Craig Reynolds boid model to track each boid’s previous position (prevPos), so that every frame draws a short line segment from there to the current position.
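The show() method above draws from this.prevPos to this.pos, which suggests each boid stores where it was on the previous frame. A minimal sketch of that bookkeeping follows, with the flocking forces left out. `TrailBoid` is a hypothetical stand-in, and the edge wrapping is an assumption, since the write-up does not say how the original handles canvas borders.

```javascript
// Hypothetical stand-in for the trail-tracking part of a boid.
// Flocking forces (separation, alignment, cohesion) are omitted.
// Assumption: boids wrap around the canvas edges.
class TrailBoid {
  constructor(x, y, vx, vy) {
    this.pos = { x, y };
    this.prevPos = { x, y };
    this.vel = { x: vx, y: vy };
  }

  update(width, height) {
    // Remember where the boid was before it moves.
    this.prevPos = { x: this.pos.x, y: this.pos.y };
    this.pos.x += this.vel.x;
    this.pos.y += this.vel.y;

    // Wrap at the edges and note whether a wrap happened.
    let wrapped = false;
    if (this.pos.x < 0) { this.pos.x += width; wrapped = true; }
    else if (this.pos.x >= width) { this.pos.x -= width; wrapped = true; }
    if (this.pos.y < 0) { this.pos.y += height; wrapped = true; }
    else if (this.pos.y >= height) { this.pos.y -= height; wrapped = true; }

    // After a wrap, collapse the segment to a point so the trail
    // does not streak across the whole canvas.
    if (wrapped) this.prevPos = { x: this.pos.x, y: this.pos.y };
  }

  // The four values a show() method would pass to line().
  segment() {
    return [this.prevPos.x, this.prevPos.y, this.pos.x, this.pos.y];
  }
}

const demo = new TrailBoid(10, 10, 2, 1);
demo.update(100, 100);
console.log(demo.segment());
```

Resetting prevPos after a wrap is one way to avoid long stray lines; a sketch that bounces boids off the edges instead would not need it.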

Milestone 2: Reading the Environment

The next challenge was getting the boids to “read” data. Before complicating things with a live video feed, I created a hidden canvas with a basic geometric shape. I programmed the boids to change their stroke color based on whether they were flying over the shape or the background. This confirmed the pixel-array math was working.
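The same kind of check can be reproduced without a canvas at all. The sketch below builds a small synthetic RGBA buffer containing a white rectangle on black and samples it with the same clamp-and-index arithmetic as show(). The helper names (`makeTestPixels`, `brightnessAt`) and the buffer size are illustrative assumptions, not taken from the original sketch.

```javascript
// Build a flat RGBA array laid out like the pixels array of an image:
// 4 entries per pixel, rows stored one after another.
function makeTestPixels(width, height, rect) {
  const pixels = new Uint8ClampedArray(width * height * 4);
  for (let y = 0; y < height; y++) {
    for (let x = 0; x < width; x++) {
      const inside =
        x >= rect.x && x < rect.x + rect.w &&
        y >= rect.y && y < rect.y + rect.h;
      const i = (x + y * width) * 4;
      const v = inside ? 255 : 0; // white shape on a black background
      pixels[i] = v;
      pixels[i + 1] = v;
      pixels[i + 2] = v;
      pixels[i + 3] = 255; // fully opaque
    }
  }
  return pixels;
}

// Same lookup as show(): clamp to the image, compute the 1D index,
// then average the R, G, and B entries.
function brightnessAt(pixels, width, height, x, y) {
  const px = Math.min(Math.max(Math.floor(x), 0), width - 1);
  const py = Math.min(Math.max(Math.floor(y), 0), height - 1);
  const i = (px + py * width) * 4;
  return (pixels[i] + pixels[i + 1] + pixels[i + 2]) / 3;
}

const W = 8;
const H = 6;
const testPixels = makeTestPixels(W, H, { x: 2, y: 1, w: 3, h: 2 });
console.log(brightnessAt(testPixels, W, H, 3.7, 2.2)); // a point over the shape
console.log(brightnessAt(testPixels, W, H, 6, 4));     // a point over the background
console.log(brightnessAt(testPixels, W, H, -5, 99));   // off-canvas, clamped to a corner
```

Because the shape's position is known in advance, any mistake in the index formula (for example swapping width and height) shows up immediately as a wrong reading.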

Challenge: Managing States

Integrating the webcam introduced a significant control-flow challenge. I had to implement a state machine (LIVE, DRAWING, FADING) that uses millis() to handle the timing. Ensuring the snapshot (snap.get()) was taken exactly once, at the moment the state shifted, was tricky but crucial for performance.
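One way to keep the timing logic manageable is to isolate it in a pure transition function that takes the current time as an argument, so it can be checked without a running sketch. The version below is a sketch under assumptions: the 15-second drawing duration comes from the write-up, but the fade length, the `step` and `fadeAmount` names, and the `capture` flag are hypothetical.

```javascript
const DRAW_MS = 15000; // from the write-up: fifteen seconds of drawing
const FADE_MS = 3000;  // assumed; the write-up does not state the fade length

// Pure transition function. `now` stands in for millis(), and `clicked`
// for a mouse press that happened this frame.
// `capture` is true only on the frame that enters DRAWING, so the
// snapshot is taken exactly once per cycle.
function step(machine, now, clicked) {
  const elapsed = now - machine.since;
  if (machine.state === "LIVE" && clicked) {
    return { state: "DRAWING", since: now, capture: true };
  }
  if (machine.state === "DRAWING" && elapsed >= DRAW_MS) {
    return { state: "FADING", since: now, capture: false };
  }
  if (machine.state === "FADING" && elapsed >= FADE_MS) {
    return { state: "LIVE", since: now, capture: false };
  }
  return { state: machine.state, since: machine.since, capture: false };
}

// Progress of the dissolve from 0 (just started) to 1 (finished).
function fadeAmount(machine, now) {
  if (machine.state !== "FADING") return 0;
  return Math.min((now - machine.since) / FADE_MS, 1);
}

let machine = { state: "LIVE", since: 0, capture: false };
machine = step(machine, 1000, true);
console.log(machine.state, machine.capture);
```

In a p5.js draw() loop, the caller would pass millis() as `now` and take the snapshot only on frames where `capture` is true. Clicks during DRAWING or FADING are ignored here, which is a design choice rather than something the write-up specifies.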

Reflection & Future Work

This project pushed me to think about interactive media not just as tools that react instantly to a user, but as living systems that take time to develop. The 15-second drawing phase forces the user to pause and watch the algorithm work, highlighting the beauty of creative coding.

For future iterations, I would love to experiment with color data instead of just brightness, perhaps mapping the RGB values to the boids’ strokes to create a pointillist, impressionist painting. Additionally, mapping the flocking variables (like separation or speed) to audio input could make the drawing process even more dynamic and expressive.
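As a rough illustration of the audio idea, a normalized loudness value could be remapped onto flocking parameters. This is only a sketch: the parameter names and ranges are hypothetical, and it assumes a level between 0 and 1, such as the value p5.sound's `AudioIn.getLevel()` reports.

```javascript
// Linear remapping with clamping, similar in spirit to p5's
// map(value, start1, stop1, start2, stop2, true).
function remap(value, inMin, inMax, outMin, outMax) {
  const t = Math.min(Math.max((value - inMin) / (inMax - inMin), 0), 1);
  return outMin + (outMax - outMin) * t;
}

// Hypothetical mapping: louder input makes boids faster and pushes them apart.
// `level` is assumed to be a loudness value in the range 0..1.
// The output ranges are placeholders to be tuned by eye.
function flockParamsFromLevel(level) {
  return {
    maxSpeed: remap(level, 0, 1, 2, 6),
    separationWeight: remap(level, 0, 1, 1.0, 2.5),
  };
}

console.log(flockParamsFromLevel(0.5));
```

Clamping keeps a sudden loud spike from sending the boids past the intended maximum speed.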