Project Overview

This project explores a single question: can a particle system feel less like simulation and more like atmosphere?

I designed a generative system that behaves like a living cloud of cosmic dust. Instead of producing one fixed composition, the sketch continuously evolves and can be steered into different “cosmic events” through three operating modes:

- NURSERY — diffuse, drifting birth-fields of matter

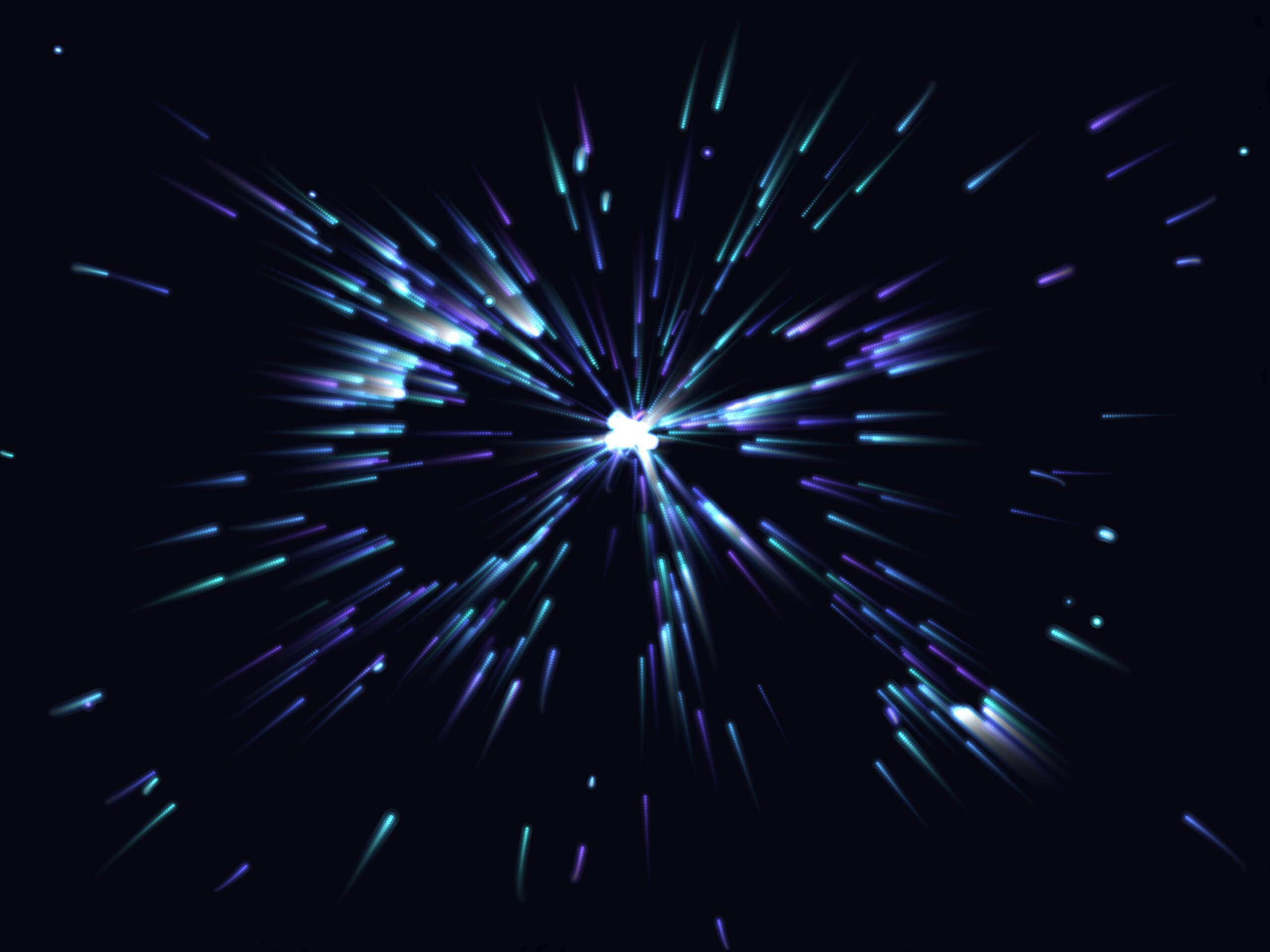

- SINGULARITY — gravitational collapse toward a center

- SUPERNOVA — violent outward blast and shock-like motion

The visual goal was to create images that feel photographic and painterly at the same time: soft glows, suspended particles, and dynamic trails that imply depth.

Core Concept

The concept is inspired by astronomical imagery, but translated into a procedural language:

- Birth (diffusion)

- Attraction (collapse)

- Explosion (release)

These are treated not only as physical states but as aesthetic states. The same particle population is reinterpreted through changing force fields and trail accumulation over time.

I was particularly interested in the tension between control (known forces, reproducible logic) and emergence (unexpected compositions and visual accidents).

This is what makes the output truly generative: the system is authored, but each frame remains open-ended.

What Changed from the Starting Sketch

The starting version established the core particle loop and three state controls.

However, it was still a prototype:

- Only a single rendering style (point strokes)

- Low-resolution/off-target export buffer

- Background tint drift issues over time

- No dedicated print workflow

- No color interaction controls

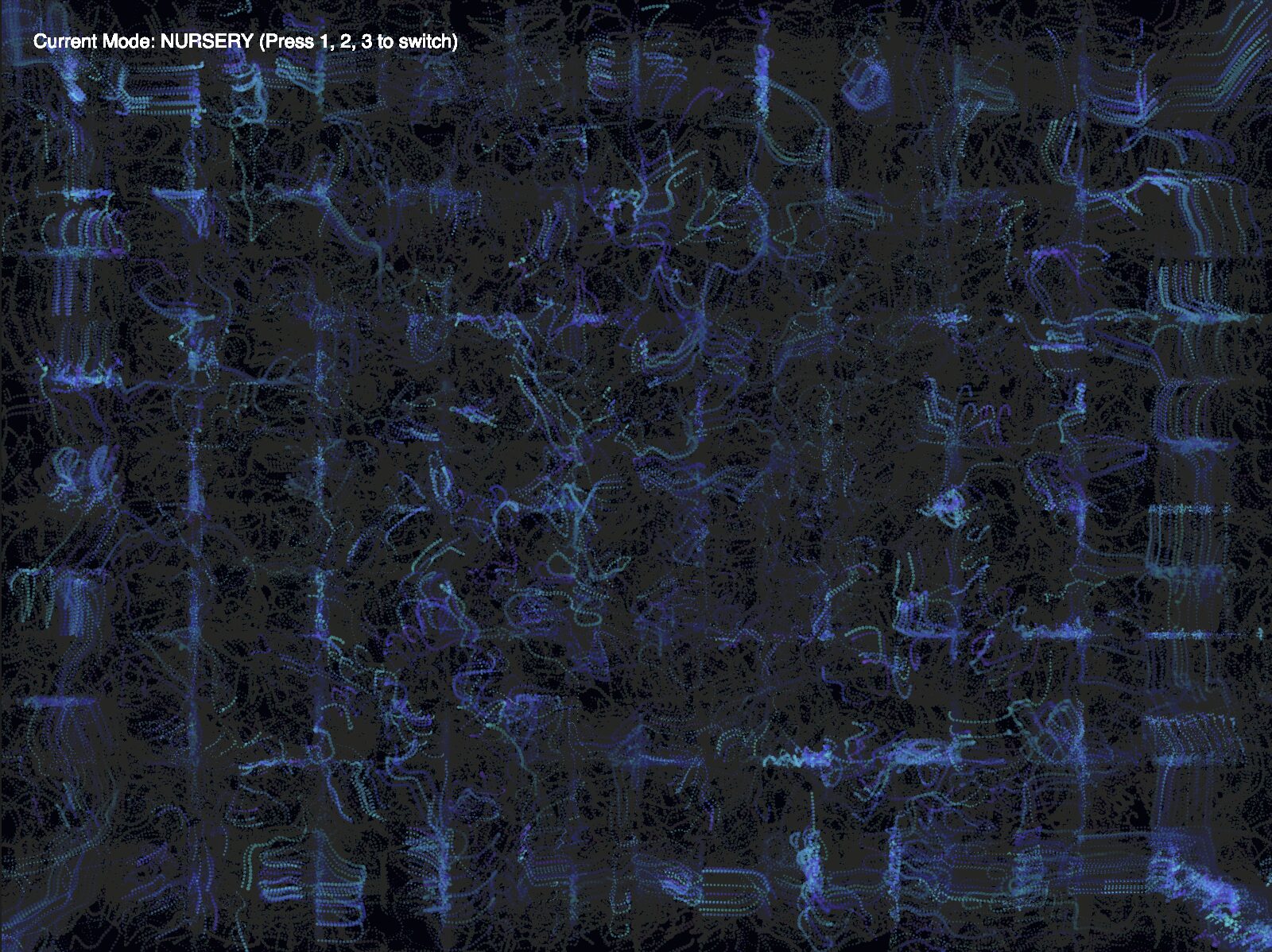

The sketch went through a series of visual changes as I experimented with different styles and debugged along the way:

Here, I experimented with the visual style of the dust and tried to keep the particles evenly spread across the sketch in Nursery mode by using grid coordinates and allowing only a few particles in each grid cell. As you can see, this led to unexpected behavior: particles repeatedly jumped between cells and appeared to cling to the cell edges, which exposed the grid pattern. I scrapped this idea altogether.

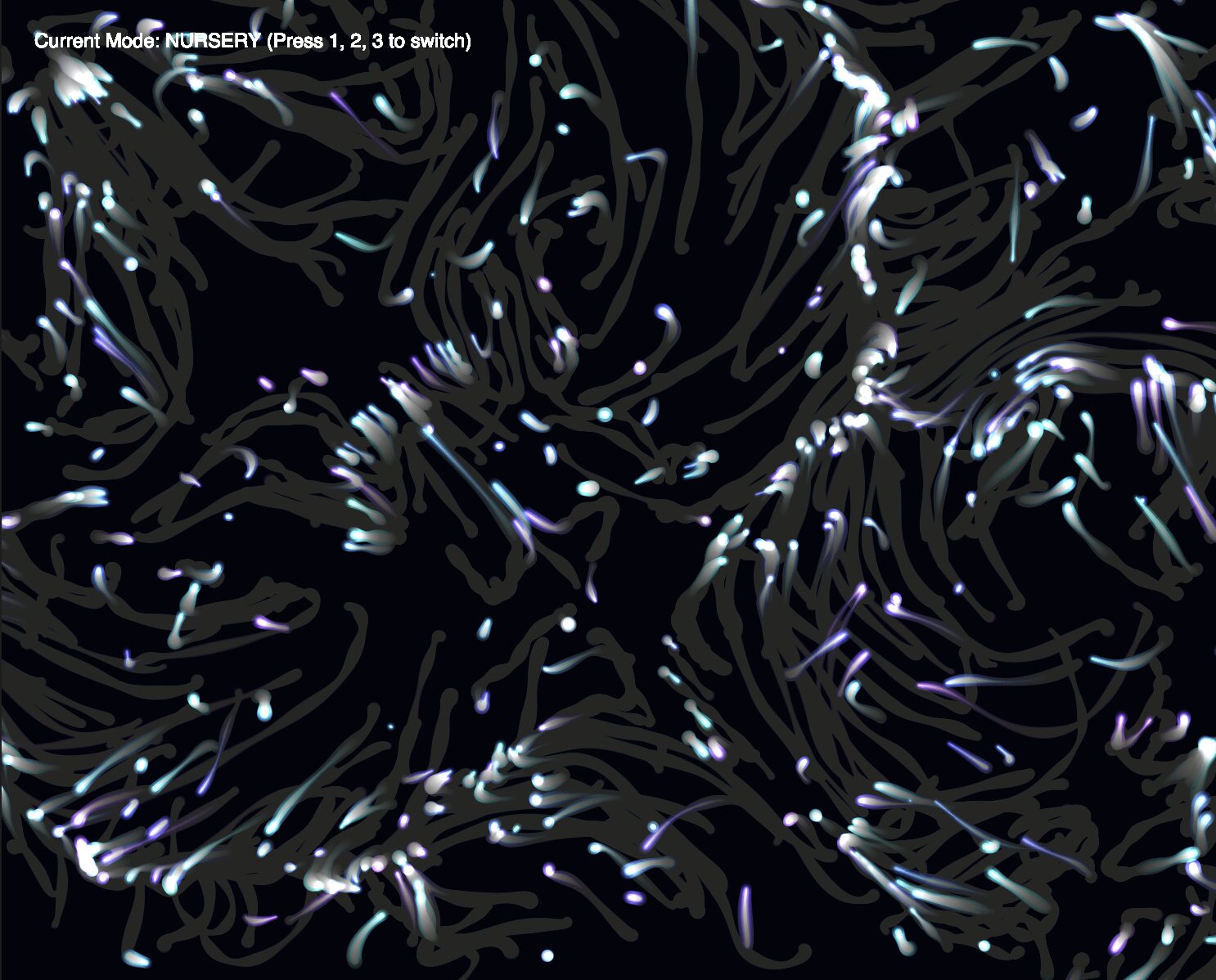

This is where I started to get the particles’ look right. I added halos around the particles for visual appeal. However, the trails were still permanently discoloring the background canvas, so I looked into fixing that. The end result, after some tweaks, is what you see in the final sketch.

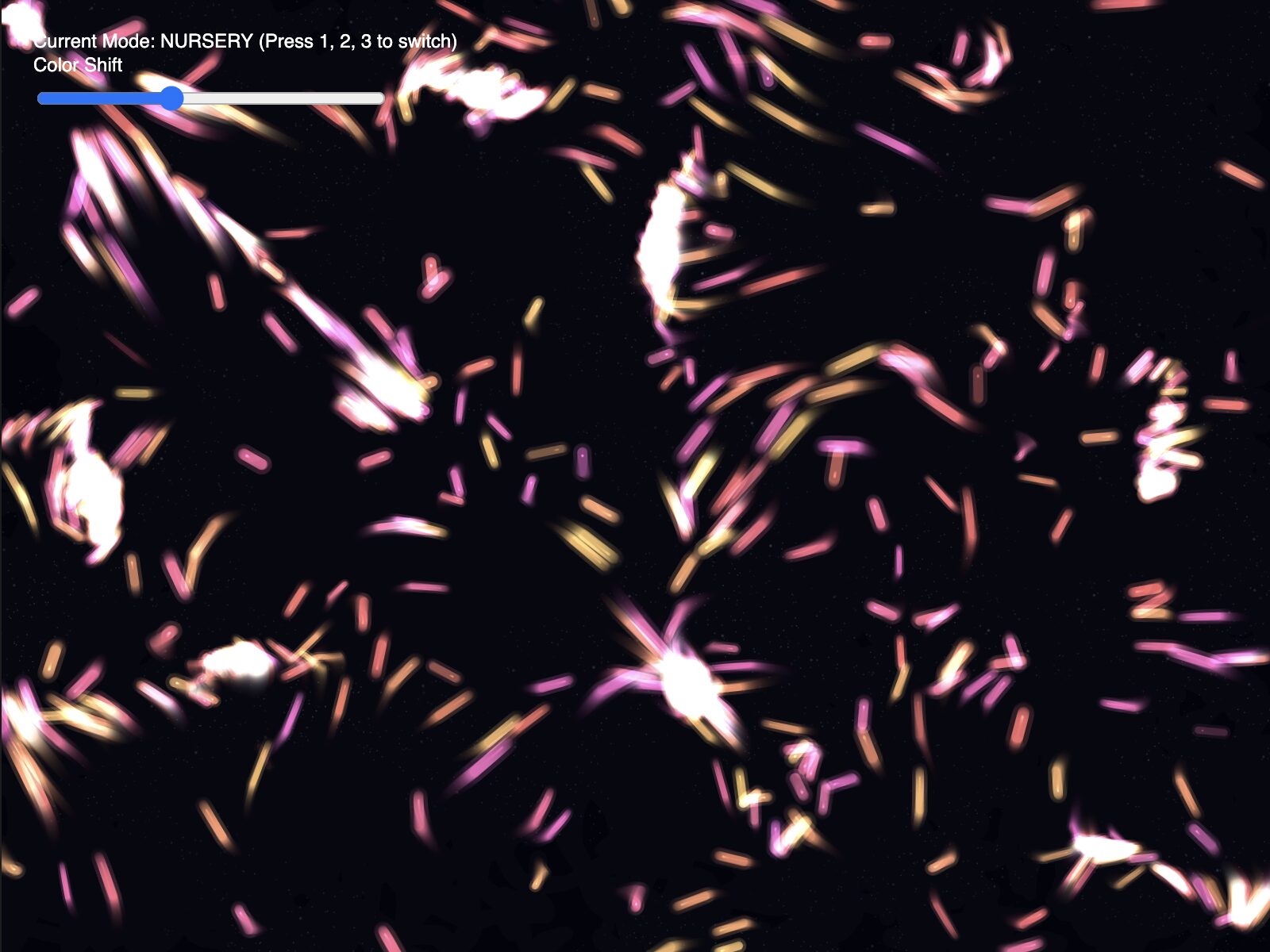

I did experiment a bit further, tweaking parameters to see if I could stumble upon something interesting. Here is an example of a variant I made this way:

Even though this looked striking, it wasn’t true to the vision of dust I set out with, so I settled on the final look.

The final midterm version is substantially expanded from the initial sketch:

A) Rendering pipeline upgrade

– Introduced layered rendering via `trailLayer` (screen) and `trailLayerHR` (high-res memory).

– Trails are preserved and faded over time independently of the base background.

– This allows longer atmospheric strokes without permanently altering the background, which was the problem in the first iteration.

B) Visual quality upgrade

– Multi-pass glow rendering per particle (halo + mid + core).

– More controlled velocity damping and motion smoothing.

– Larger and more visible dust behavior for stronger material presence.
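As a rough illustration of the halo + mid + core idea, the sketch below describes one particle as three concentric passes, drawn from largest and faintest to smallest and brightest. The function name `glowPasses` and every multiplier in it are hypothetical values chosen for this example, not the numbers used in the actual sketch.

```javascript
// Hypothetical helper: describe the three glow passes for one particle.
// `size` is the core diameter in pixels, `brightness` is a 0..1 factor.
function glowPasses(size, brightness) {
  return [
    // Wide, very faint outer halo.
    { name: "halo", diameter: size * 6, alpha: 0.08 * brightness },
    // Medium ring that bridges halo and core.
    { name: "mid", diameter: size * 2.5, alpha: 0.35 * brightness },
    // Small, fully bright core drawn last so it sits on top.
    { name: "core", diameter: size, alpha: 1.0 * brightness },
  ];
}

// Example: a 4 px particle at full brightness.
for (const pass of glowPasses(4, 1)) {
  console.log(pass.name, pass.diameter, pass.alpha);
}
```

In a p5.js sketch, each pass would map to one filled circle drawn at the particle position with the given diameter and alpha.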

C) Interaction + composition upgrade

– Added a Color Shift slider (`0–360`) that rotates the palette's hue in HSB space.

– Default keeps the original blue/purple range; slider supports warm/yellow/red variants for alternate print moods.
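The hue rotation behind the Color Shift slider can be sketched in plain JavaScript. This is a minimal illustration rather than the sketch's actual code: `shiftHue` and the sample base hues are hypothetical, and it assumes hues are measured in degrees, as with `colorMode(HSB, 360, 100, 100)` in p5.js.

```javascript
// Hypothetical helper: rotate a base hue (in degrees) by the slider value,
// wrapping around the 0-360 hue circle.
function shiftHue(baseHue, shift) {
  // The double modulo keeps the result in [0, 360) even for negative input.
  return (((baseHue + shift) % 360) + 360) % 360;
}

// Assumed base palette in the blue/purple range (illustrative values only).
const baseHues = [220, 250, 280];

// A slider value of 0 keeps the original palette; 150 pushes it toward warm tones.
const cool = baseHues.map((h) => shiftHue(h, 0));
const warm = baseHues.map((h) => shiftHue(h, 150));
console.log(cool, warm);
```

With these sample values, a shift of 150 maps the hues 220, 250, and 280 to 10, 40, and 70, which moves the palette from blue/purple into red, orange, and yellow.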

D) Output and print-readiness upgrade

– Export target standardized at 4800 × 3600.

– Save trigger implemented on S key.

– Timestamped filenames generated for iteration tracking.
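The export steps above can be sketched as two small helpers: one that maps on-screen coordinates onto the 4800 × 3600 buffer, and one that builds a timestamped filename. The on-screen size of 1200 × 900, the filename prefix, and the helper names `hrScale`, `toExport`, and `timestampedName` are assumptions made for this example.

```javascript
// The export size comes from the text above; the on-screen size is an
// assumption for illustration (same 4:3 aspect ratio).
const EXPORT_W = 4800;
const EXPORT_H = 3600;
const SCREEN_W = 1200;
const SCREEN_H = 900;

// Scale factor that maps on-screen coordinates onto the high-res buffer.
function hrScale(screenW, exportW) {
  return exportW / screenW;
}

// Map an on-screen point to the matching point in the export buffer.
function toExport(x, y) {
  const s = hrScale(SCREEN_W, EXPORT_W);
  return { x: x * s, y: y * s };
}

// Build a sortable filename such as "cosmic-dust_20240131-094507.png".
function timestampedName(prefix, date) {
  const pad = (n) => String(n).padStart(2, "0");
  const stamp =
    date.getFullYear() + pad(date.getMonth() + 1) + pad(date.getDate()) +
    "-" + pad(date.getHours()) + pad(date.getMinutes()) + pad(date.getSeconds());
  return `${prefix}_${stamp}.png`;
}

console.log(toExport(600, 450), timestampedName("cosmic-dust", new Date()));
```

In a p5.js sketch, the filename helper would typically be called from `keyPressed()` when the S key is detected, just before the high-resolution layer is saved.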

Technical Implementation

4.1 Particle engine

The sketch uses a classic particle architecture:

- `Dust` class with:

  - `pos`, `vel`, `acc`

  - `lifespan`

  - per-particle hue and size attributes

  - `applyForce()` for composable behavior

- state-based force fields applied every frame

This combines core programming techniques from class:

1. Particle systems (object lifecycle + continual spawn/death)

2. Vector-based force simulation (`p5.Vector`, gravity/repulsion/drag)

It also uses:

– oscillation (`sin`) for shimmer dynamics,

– state machine logic for mode switching,

– off-screen rendering buffers for high-resolution output.
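A minimal stand-in for this architecture is sketched below. It is not the project's code: plain `{x, y}` objects replace `p5.Vector` so the example runs without p5.js, the field names follow the list above, and the constants (starting lifespan, damping, shimmer speed) plus the `update`, `shimmer`, `isDead`, and `stepSystem` names are illustrative assumptions.

```javascript
// Simplified Dust particle using plain objects instead of p5.Vector.
class Dust {
  constructor(x, y, hue, size) {
    this.pos = { x, y };
    this.vel = { x: 0, y: 0 };
    this.acc = { x: 0, y: 0 };
    this.lifespan = 255; // assumed starting value
    this.hue = hue;
    this.size = size;
  }

  // Forces accumulate in acc, so any number of them can be combined per frame.
  applyForce(force) {
    this.acc.x += force.x;
    this.acc.y += force.y;
  }

  // Integrate one frame: acceleration -> velocity (damped) -> position.
  update(damping = 0.98, decay = 1) {
    this.vel.x = (this.vel.x + this.acc.x) * damping;
    this.vel.y = (this.vel.y + this.acc.y) * damping;
    this.pos.x += this.vel.x;
    this.pos.y += this.vel.y;
    this.acc = { x: 0, y: 0 }; // clear forces for the next frame
    this.lifespan -= decay;
  }

  // Sine-based shimmer: a brightness factor oscillating between 0.5 and 1.
  shimmer(frame) {
    return 0.75 + 0.25 * Math.sin(frame * 0.1 + this.hue);
  }

  isDead() {
    return this.lifespan <= 0;
  }
}

// Continual spawn/death: advance every particle, drop the dead ones,
// then top the population back up to the target count.
function stepSystem(particles, targetCount, spawn) {
  for (const p of particles) p.update();
  const alive = particles.filter((p) => !p.isDead());
  while (alive.length < targetCount) alive.push(spawn());
  return alive;
}
```

Accumulating forces in `acc` and clearing it each frame is what makes behaviors composable: drift, attraction, and drag can each call `applyForce()` without knowing about one another.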

4.2 State logic

The state variable controls force behavior:

– NURSERY: noise-like drifting flow

– SINGULARITY: attraction toward cursor

– SUPERNOVA: repulsion blast from cursor zone

This keeps one core system while producing multiple visual outcomes from the same underlying model.
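The mode switch can be pictured as a single function that returns a different force for each state. The sketch below is a hedged approximation: `forceFor`, the strength constants, and the sine/cosine field used in place of a real noise function are all assumptions, and `target` stands in for the cursor position.

```javascript
// Hypothetical force selector: returns the force on a particle at `pos`
// for the current mode. `t` is a time value that animates the drift.
function forceFor(state, pos, target, t) {
  const dx = target.x - pos.x;
  const dy = target.y - pos.y;
  // Clamp the distance to avoid division by zero right at the target.
  const dist = Math.max(Math.hypot(dx, dy), 1);

  switch (state) {
    case "NURSERY": {
      // Stand-in for a noise field: a smooth angle that varies with
      // position and time, giving a gentle drifting flow.
      const angle = Math.sin(pos.x * 0.01 + t) + Math.cos(pos.y * 0.01 - t);
      return { x: Math.cos(angle) * 0.05, y: Math.sin(angle) * 0.05 };
    }
    case "SINGULARITY":
      // Pull toward the target with an assumed constant strength.
      return { x: (dx / dist) * 0.5, y: (dy / dist) * 0.5 };
    case "SUPERNOVA": {
      // Push away from the target, strongest close to it.
      const strength = 50 / dist;
      return { x: (-dx / dist) * strength, y: (-dy / dist) * strength };
    }
    default:
      return { x: 0, y: 0 };
  }
}

const pull = forceFor("SINGULARITY", { x: 0, y: 0 }, { x: 10, y: 0 }, 0);
console.log(pull);
```

Because every mode returns the same `{x, y}` shape, the particle code never needs to know which mode is active; it only receives a force.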

4.3 Trail architecture and background consistency

A key challenge was preserving a stable background while retaining long-lived trails.

Solution:

– render base color to main canvas each frame,

– draw particles to transparent trail layers,

– fade trails by reducing alpha over time,

– composite trail layer over base.

This decouples “world background” from “particle residue,” preventing muddy drift and preserving consistent color identity.
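To see why this separation keeps the background stable, the following plain-JavaScript sketch follows one color channel of one pixel after a particle passes over it. The base value, trail color, fade factor, and the names `composite` and `fadeTrail` are assumptions for illustration; the real sketch works on p5.js graphics layers rather than single numbers.

```javascript
// One colour channel of one pixel: colours in 0..255, alpha in 0..1.
const BASE = 12;   // assumed background value, redrawn every frame
const TRAIL = 200; // assumed particle colour left on the trail layer
const FADE = 0.95; // assumed per-frame multiplier on the trail's alpha

// Standard "source over" blend of the trail layer on top of the base.
function composite(base, trailColor, trailAlpha) {
  return base * (1 - trailAlpha) + trailColor * trailAlpha;
}

// A particle marks the pixel once; the trail then fades for `frames` frames.
function fadeTrail(frames) {
  let alpha = 1;
  const history = [];
  for (let i = 0; i < frames; i++) {
    history.push(composite(BASE, TRAIL, alpha));
    alpha *= FADE;
    // Snap to fully transparent once below what 8 bits can represent.
    if (alpha < 1 / 255) alpha = 0;
  }
  return history;
}

const values = fadeTrail(150);
console.log(values[0], values[values.length - 1]);
```

The pixel starts at the trail color and ends at exactly the base value, because the base is never mixed into the trail layer. Only the trail's alpha changes, so no residue can accumulate in the background.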

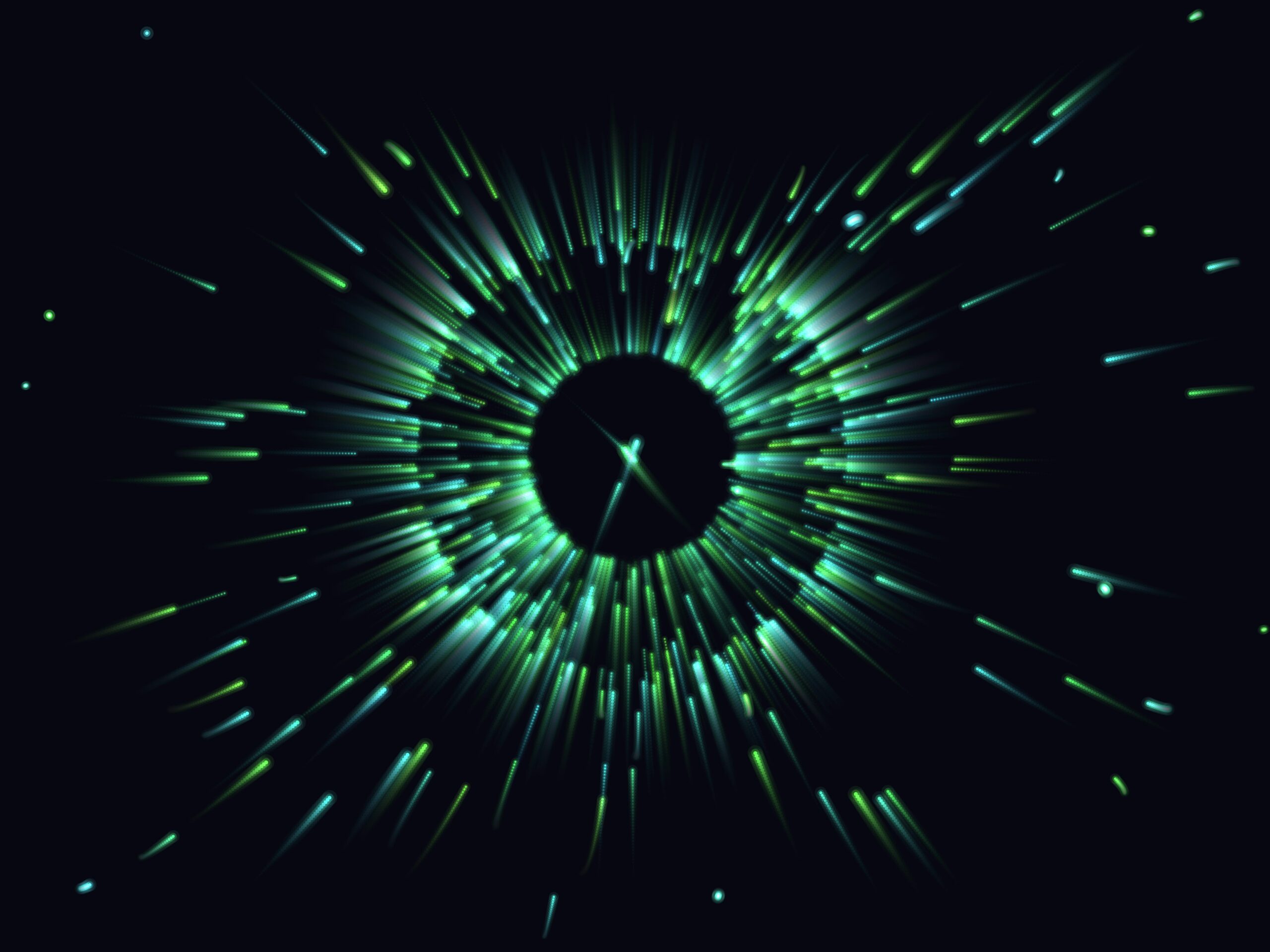

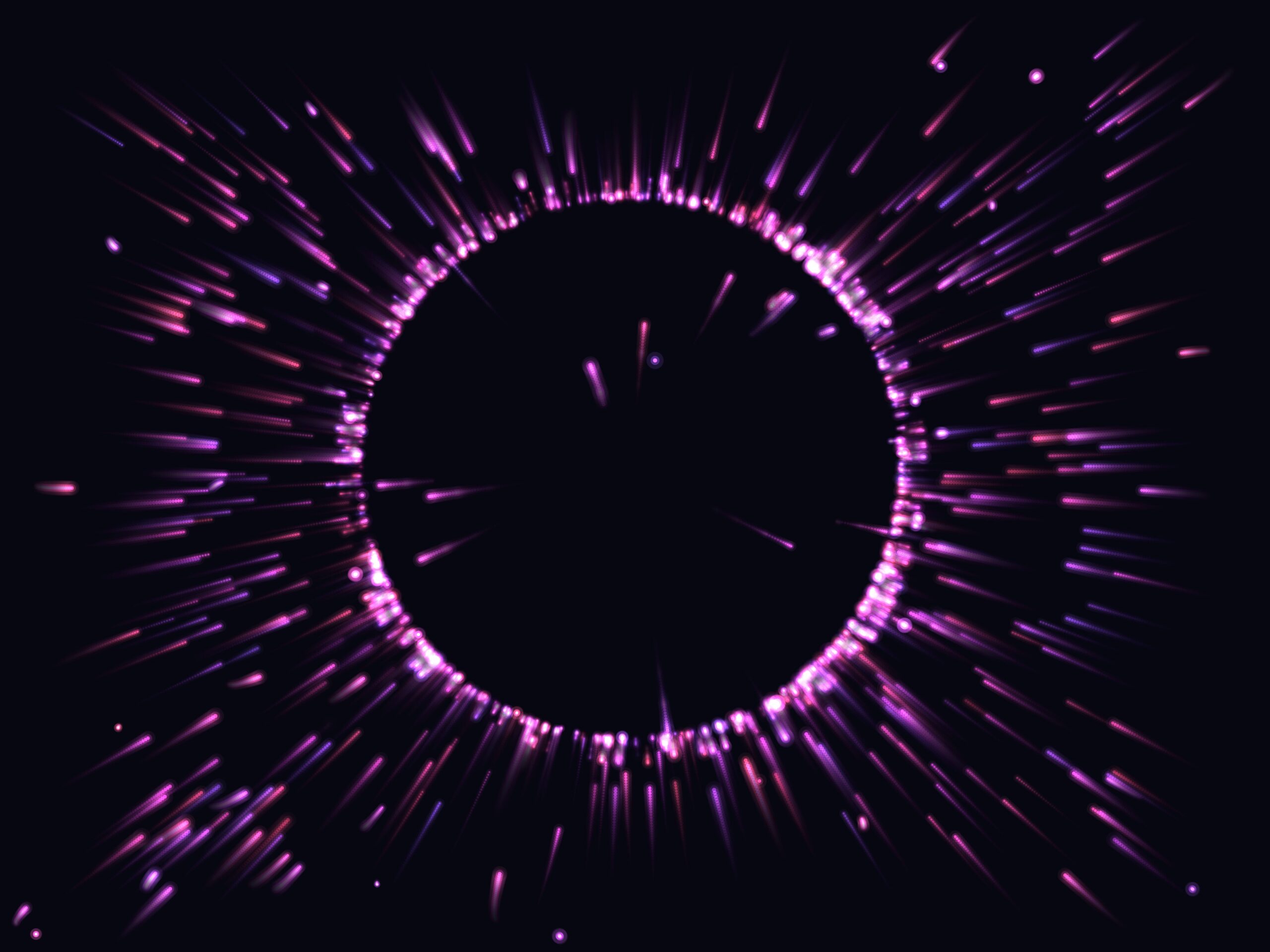

Final Outputs

A major shift in mindset was treating the sketch not just as a visual toy, but as a capture instrument for composition.

I began composing moments intentionally:

– waiting for dense flow regions,

– steering with the cursor during singularity/supernova moments,

– selecting color shift and temporal build-up before capture.

This made each final export less like a screenshot and more like a harvested frame from a living system.

I particularly like the third output, which reminds me of the film Arrival (2016), in which extraterrestrials communicate visually through circular logograms much like the one in that output. See if you can recreate it in the sketch!

Video Documentation

Reflection

This project taught me that strong generative outcomes come from balancing three layers:

- Physics logic (how particles move)

- Render logic (how particles appear)

- Capture logic (how moments are preserved).

The starting sketch had only the first layer; the final midterm version succeeded only once all three were integrated.

What worked best:

- Robust mode switching with meaningful visual differences

- Stable background + persistent trails

- High-resolution pipeline aligned with print requirements.

What I would improve next:

- Richer mode-specific rendering signatures (even stronger distinction per mode)

- Static star-dust depth field layer

References / Inspirations

- p5.js Reference — https://p5js.org/reference/

- p5.js `createGraphics()` documentation

- Astrophotography color references (nebula palettes)

- Class lecture notes on particle systems, vectors, and oscillation

AI Disclosure

AI assistance was used during development for debugging rendering behavior, refining export strategy, and structuring documentation. All concept direction, aesthetic decisions, interaction design, and final selection of outputs were authored and curated by me.