The Concept

So after doing the F1 attractor simulation in assignment 3, I kept thinking about it. It worked and it looked cool, but something about it always felt a bit… mechanical. The car was basically just getting yanked from point to point by invisible gravity wells. There was no real intelligence there; it was just physics doing all the work.

When we started going through the steering behaviors in class (path following, separation, the flocking stuff), I immediately thought: this is how I can rebuild that track properly. Not with attractors pulling a car around, but with a car that actually decides where to go: one that reads the path, steers toward it, and knows to keep its distance from the other cars around it. That difference matters to me. One feels like a simulation; the other feels like behavior.

So the idea for this assignment was to reimplement my F1 track from assignment 3 but change the physics underneath. Instead of attractors, I define an actual closed Path using the same centerline points. Then I put five cars on it, each with its own random top speed, and let them figure it out. Path following gets them around the track; separation keeps them from piling up on each other.

The thing I really wanted to see was whether giving each car a slightly different speed would naturally create that staggered, spread-out field you see in real racing, where fast cars pull away and slow ones fall behind. It absolutely does, and I love how it turned out.

The Physics Behind It

The two behaviors powering everything here come straight from what we did in class.

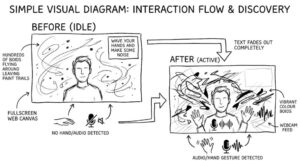

Path Following works by looking ahead: each car projects a “future position” 30px in front of itself, in the direction of its current velocity. It then finds the closest point on the path (the normal point) to that future position. If the future position has drifted more than the path radius away from the centerline, the car steers toward a target 25px further along that segment. If it’s still within the band, it does nothing and just keeps going.
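A minimal sketch of that look-ahead logic follows, assuming the track is an array of points forming a closed loop. The write-up’s vel.setMag / vel.limit calls look like p5.js’s p5.Vector. To keep this runnable outside a browser, `Vec` is a hypothetical stand-in with the same method names. `pathFollowTarget`, `normalOnSegment`, and `seek` are illustrative names. The `maxForce` cap is an assumption, since the write-up doesn’t mention one.

```javascript
// Hypothetical stand-in for p5.Vector (same method names) so this runs in plain Node.
class Vec {
  constructor(x = 0, y = 0) { this.x = x; this.y = y; }
  copy() { return new Vec(this.x, this.y); }
  add(v) { this.x += v.x; this.y += v.y; return this; }
  sub(v) { this.x -= v.x; this.y -= v.y; return this; }
  mult(s) { this.x *= s; this.y *= s; return this; }
  dot(v) { return this.x * v.x + this.y * v.y; }
  mag() { return Math.hypot(this.x, this.y); }
  dist(v) { return Math.hypot(this.x - v.x, this.y - v.y); }
  setMag(m) { const l = this.mag(); if (l > 0) this.mult(m / l); return this; }
  limit(m) { if (this.mag() > m) this.setMag(m); return this; }
}

const LOOKAHEAD = 30;    // px projected ahead of the car (value from the write-up)
const TARGET_AHEAD = 25; // px further along the matched segment (value from the write-up)

// Closest point to p on segment a→b, clamped so it never lands past an endpoint.
function normalOnSegment(p, a, b) {
  const ab = b.copy().sub(a);
  const lenSq = ab.dot(ab);
  const t = lenSq === 0 ? 0 : Math.min(1, Math.max(0, p.copy().sub(a).dot(ab) / lenSq));
  return a.copy().add(ab.mult(t));
}

// Returns the point to seek this frame, or null when the car is still inside the band.
function pathFollowTarget(pos, vel, pts, radius) {
  // Predict where the car will be LOOKAHEAD px from now along its current heading.
  const future = pos.copy().add(vel.copy().setMag(LOOKAHEAD));
  let best = null;
  let bestDist = Infinity;
  let bestDir = null;
  // Check every segment of the closed loop, including the last → first one.
  for (let i = 0; i < pts.length; i++) {
    const a = pts[i];
    const b = pts[(i + 1) % pts.length];
    const normal = normalOnSegment(future, a, b);
    const d = future.dist(normal);
    if (d < bestDist) {
      bestDist = d;
      best = normal;
      bestDir = b.copy().sub(a);
    }
  }
  if (bestDist <= radius) return null; // inside the band: no correction needed
  return best.copy().add(bestDir.setMag(TARGET_AHEAD));
}

// Standard seek: desired velocity toward the target minus current velocity, capped.
// maxSpeed and maxForce are assumed parameters, not values from the write-up.
function seek(pos, vel, target, maxSpeed, maxForce) {
  const desired = target.copy().sub(pos).setMag(maxSpeed);
  return desired.sub(vel).limit(maxForce);
}
```

Here is a worked example on a square test track [(0, 0), (200, 0), (200, 200), (0, 200)] with radius 15. A car at (50, 40) moving at (5, 0) predicts (80, 40) and matches the top edge at (80, 0). That puts it 40px outside the band, so it gets the target (105, 0).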

Separation is the same weighted-distance pattern from the flocking exercise. Each car checks every neighbor within a 55px radius. For each one, it builds a repulsion vector pointing away from that neighbor, scaled by 1/d so that closer cars push harder. The summed vector is then turned into a steering force. I weighted separation at 1.8× and path following at 1.0×, so when two cars are about to collide, staying apart wins over staying on the line. In practice this means they’ll briefly drift wide in a corner to avoid each other, then snap back, which honestly looks a lot like real racing.
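A minimal sketch of the 1/d-weighted separation and the 1.8 / 1.0 blend follows. `Vec` is again a hypothetical stand-in for p5.Vector. Using each car’s own `topSpeed` as the desired speed and capping with `maxForce` are assumptions modeled on the usual Reynolds-style “desired minus velocity” steering, not confirmed details of the sketch.

```javascript
// Hypothetical stand-in for p5.Vector method names so this runs without a browser.
class Vec {
  constructor(x = 0, y = 0) { this.x = x; this.y = y; }
  copy() { return new Vec(this.x, this.y); }
  add(v) { this.x += v.x; this.y += v.y; return this; }
  sub(v) { this.x -= v.x; this.y -= v.y; return this; }
  mult(s) { this.x *= s; this.y *= s; return this; }
  mag() { return Math.hypot(this.x, this.y); }
  dist(v) { return Math.hypot(this.x - v.x, this.y - v.y); }
  setMag(m) { const l = this.mag(); if (l > 0) this.mult(m / l); return this; }
  limit(m) { if (this.mag() > m) this.setMag(m); return this; }
}

const SEP_RADIUS = 55;   // px (from the write-up)
const SEP_WEIGHT = 1.8;  // (from the write-up)
const PATH_WEIGHT = 1.0; // (from the write-up)

// Sum of "away" vectors from every neighbor inside SEP_RADIUS, each with length 1/d.
function separationForce(car, cars, maxForce) {
  const sum = new Vec();
  let count = 0;
  for (const other of cars) {
    if (other === car) continue;
    const d = car.pos.dist(other.pos);
    if (d > 0 && d < SEP_RADIUS) {
      // Vector from the neighbor to this car, rescaled so closer neighbors push harder.
      sum.add(car.pos.copy().sub(other.pos).setMag(1 / d));
      count++;
    }
  }
  // Nobody close (or pushes cancel out): no separation steering this frame.
  if (count === 0 || sum.mag() === 0) return new Vec();
  // Steering = desired velocity (away from the crowd at top speed) minus current velocity.
  const desired = sum.setMag(car.topSpeed);
  return desired.sub(car.vel).limit(maxForce);
}

// Weighted blend of the two behaviors: separation dominates when both are active.
function combinedForce(sepForce, pathForce) {
  return sepForce.copy().mult(SEP_WEIGHT).add(pathForce.copy().mult(PATH_WEIGHT));
}
```

Returning a zero vector when no neighbor is in range keeps separation from braking a car that has open track around it.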

Each car also gets a random top speed at setup, between 4.0 and 7.0 units per frame. That’s the one thing I really wanted to explore, and it gives the whole thing an organic feel: cars naturally pull away from each other over time instead of bunching up into a permanent traffic jam.

Building It Up: Milestones & Challenges

Milestone 1: One Car, One Path

I started simple: just get a single car to follow the path before worrying about anything else. I defined the Path class using the same centerline coordinates I had mapped out in assignment 3, set the radius to 15px so there’s a comfortable band to work in, and got one car steering around the track.


This was more annoying to get right than I expected. The path is closed, which means I loop through every segment, including the one that wraps from the last point back to the first. For a while I forgot to use (i + 1) % pts.length for the segment’s end index. The car would follow the track fine for 12 segments and then fly off the canvas when it reached the end. Once I fixed the wraparound, it went smoothly.
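The wraparound bug can be shown with segment indices alone. This sketch assumes the buggy loop simply stopped one point early. The write-up doesn’t say exactly how it failed, and `closedSegments` / `openSegments` are hypothetical helper names.

```javascript
// Index pairs [start, end] for each segment of a closed path.
// The modulo makes the final segment run from the last point back to the first.
function closedSegments(pts) {
  const segs = [];
  for (let i = 0; i < pts.length; i++) {
    segs.push([i, (i + 1) % pts.length]);
  }
  return segs;
}

// Buggy version: without the wraparound there is no closing segment,
// so a path of N points only gets N - 1 segments.
function openSegments(pts) {
  const segs = [];
  for (let i = 0; i < pts.length - 1; i++) {
    segs.push([i, i + 1]);
  }
  return segs;
}

const pts = Array.from({ length: 5 }, (_, i) => ({ x: i * 10, y: 0 }));
console.log(closedSegments(pts).length); // 5
console.log(openSegments(pts).length);   // 4
console.log(closedSegments(pts)[4]);     // [ 4, 0 ]  (last point back to the first)
```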

I also had to think about what happens when the normal point falls outside a segment, like when the car is near a corner and the normal projects past the endpoint. I clamped it using a dot product check, the same way the vehicle path sketch from class handles it. Once the clamping was in, corners became much smoother.
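One common form of that dot-product clamp projects the point onto the segment as a parameter t and clamps t to [0, 1]. The class sketch may phrase the check differently. `clampedNormalPoint` and the `Vec` stand-in are hypothetical names.

```javascript
// Hypothetical minimal 2D vector with p5.Vector-style method names.
class Vec {
  constructor(x = 0, y = 0) { this.x = x; this.y = y; }
  copy() { return new Vec(this.x, this.y); }
  add(v) { this.x += v.x; this.y += v.y; return this; }
  sub(v) { this.x -= v.x; this.y -= v.y; return this; }
  mult(s) { this.x *= s; this.y *= s; return this; }
  dot(v) { return this.x * v.x + this.y * v.y; }
}

// Normal point of p on segment a→b, clamped to the segment.
function clampedNormalPoint(p, a, b) {
  const ab = b.copy().sub(a);
  const lenSq = ab.dot(ab);
  if (lenSq === 0) return a.copy(); // degenerate segment: both ends coincide
  // t = (ap · ab) / |ab|²: 0 at a, 1 at b, outside [0, 1] when the projection overshoots.
  const t = p.copy().sub(a).dot(ab) / lenSq;
  const clamped = Math.max(0, Math.min(1, t));
  return a.copy().add(ab.mult(clamped));
}

const a = new Vec(0, 0);
const b = new Vec(100, 0);
console.log(clampedNormalPoint(new Vec(40, 10), a, b));  // (40, 0)
console.log(clampedNormalPoint(new Vec(150, 20), a, b)); // (100, 0): clamped to b
console.log(clampedNormalPoint(new Vec(-30, 10), a, b)); // (0, 0): clamped to a
```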

Milestone 2: Adding the Other Four Cars

Once one car worked, I duplicated it out to five, gave each one a random speed, and staggered their starting positions around the track so they wouldn’t spawn on top of each other. I used five roughly equally spaced positions: one at the top of the track, two in the straight sections, and two in the lower curves.
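The write-up places the cars at hand-picked spots. An alternative, which is not what the sketch does, is to compute roughly equal spacing by stepping through the centerline indices. This version also draws each top speed from the 4.0 to 7.0 range. `spawnCars` is a hypothetical helper, and the 13-point circular centerline is made-up test data.

```javascript
const NUM_CARS = 5;
const MIN_TOP_SPEED = 4.0; // from the write-up
const MAX_TOP_SPEED = 7.0; // from the write-up

// Hypothetical helper: spreads cars across centerline indices (needs pts.length >= NUM_CARS
// for distinct starts) and gives each a random top speed in [MIN_TOP_SPEED, MAX_TOP_SPEED).
function spawnCars(pts, rng = Math.random) {
  const cars = [];
  for (let k = 0; k < NUM_CARS; k++) {
    const startIndex = Math.floor((k * pts.length) / NUM_CARS);
    cars.push({
      startIndex,
      pos: { x: pts[startIndex].x, y: pts[startIndex].y },
      topSpeed: MIN_TOP_SPEED + rng() * (MAX_TOP_SPEED - MIN_TOP_SPEED),
    });
  }
  return cars;
}

// Made-up 13-point loop standing in for the real centerline.
const centerline = Array.from({ length: 13 }, (_, i) => ({
  x: 200 * Math.cos((2 * Math.PI * i) / 13),
  y: 200 * Math.sin((2 * Math.PI * i) / 13),
}));
const grid = spawnCars(centerline);
console.log(grid.map((c) => c.startIndex)); // [ 0, 2, 5, 7, 10 ]
```

Passing `rng` as a parameter makes the reset (new random speeds) easy to test with a fixed value.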

The first run with five cars immediately showed me the separation wasn’t strong enough. The cars followed the path fine individually. But the moment two of them got close, they’d kind of phase through each other, because the separation force wasn’t overriding the path force. I bumped the separation weight from 1.0 to 1.8 and that was enough; they now visibly push each other apart without losing the track completely.

Challenge: Minimum Speed vs. Separation Pushing Cars Off Track

The hardest thing to balance was what happens when separation is pushing a car sideways and the car’s velocity drops below the minimum threshold at the same time. My minimum speed enforcement was vel.setMag(topSpeed * 0.45), which keeps the velocity pointing in whatever direction it already has. If separation had rotated that direction sideways or slightly off-track, locking in the minimum speed along it would send the car drifting into the infield.

The fix was ordering things correctly. I apply all behavior forces first, then let vel.add(acc) happen naturally, then apply vel.limit(topSpeed) for the maximum, and only then run the minimum-speed check. That way the minimum speed respects whatever combined steering direction the car has already settled on for that frame, rather than fighting the other forces. Once I got the order right, the cars stopped randomly deciding to drive through the grass.
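The update order described above fits in a single function. This is a minimal sketch: the `car` object shape (`pos`, `vel`, `acc`, `topSpeed`) and the `update` name are assumptions, and `Vec` is a hypothetical stand-in for p5.Vector.

```javascript
// Hypothetical stand-in for p5.Vector method names.
class Vec {
  constructor(x = 0, y = 0) { this.x = x; this.y = y; }
  add(v) { this.x += v.x; this.y += v.y; return this; }
  mult(s) { this.x *= s; this.y *= s; return this; }
  mag() { return Math.hypot(this.x, this.y); }
  setMag(m) { const l = this.mag(); if (l > 0) this.mult(m / l); return this; }
  limit(m) { if (this.mag() > m) this.setMag(m); return this; }
}

const MIN_SPEED_FRACTION = 0.45; // minimum speed = 45% of each car's top speed

// One physics step: forces, then max cap, then min floor, then move.
function update(car, forces) {
  for (const f of forces) car.acc.add(f); // 1. accumulate every weighted behavior force
  car.vel.add(car.acc);                   // 2. steering changes direction and size
  car.vel.limit(car.topSpeed);            // 3. cap at this car's own top speed
  const minSpeed = car.topSpeed * MIN_SPEED_FRACTION;
  if (car.vel.mag() < minSpeed) {
    // 4. floor applied to the already-steered direction (a zero vector stays zero)
    car.vel.setMag(minSpeed);
  }
  car.pos.add(car.vel);                   // 5. move
  car.acc.mult(0);                        // 6. clear forces for the next frame
}
```

Consider a slow car with velocity (1, 0) that receives a sideways force (0, 1). Its velocity first becomes (1, 1). Only then is it stretched to the 2.7 minimum (0.45 × 6) along that new 45° heading, instead of the minimum being locked in along the old one.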

Milestone 3: Colored Cars and Trail Polish

Once the behavior was solid, I went back to visuals. I gave each car its own color based on real F1 team colors: Ferrari red, Williams blue, McLaren orange, Aston Martin green, and a purple one. Each trail is drawn in its car’s color and fades out using the same opacity and stroke-weight gradient as in assignment 3.
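A fading trail like this usually comes down to a fixed-length position history plus a per-segment style that ramps from faint and thin to opaque and thick. The constants below are hypothetical placeholders, since the real gradient lives in the assignment 3 code, and `pushTrail` / `trailSegmentStyle` are illustrative names.

```javascript
// Hypothetical trail settings: the actual values come from the assignment 3 code.
const TRAIL_LENGTH = 40;
const MAX_ALPHA = 255;
const MIN_WEIGHT = 1;
const MAX_WEIGHT = 4;

// Keep a fixed-length history of positions, oldest first.
function pushTrail(trail, pos) {
  trail.push({ x: pos.x, y: pos.y });
  if (trail.length > TRAIL_LENGTH) trail.shift(); // drop the oldest point
}

// Opacity and stroke weight for the segment from trail[i] to trail[i + 1]:
// the oldest segment is transparent and thin, the newest is opaque and thick.
function trailSegmentStyle(i, trailLength) {
  const segments = trailLength - 1;
  const t = segments <= 1 ? 1 : i / (segments - 1); // 0 = oldest, 1 = newest
  return {
    alpha: t * MAX_ALPHA,
    weight: MIN_WEIGHT + t * (MAX_WEIGHT - MIN_WEIGHT),
  };
}
```

In p5.js, each segment would then be drawn with the car’s RGB color plus the computed alpha passed to `stroke()`, followed by `strokeWeight()` and `line()`.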

I also added a showPath toggle on the P key so you can see the path band overlaid on the track. This was really just for debugging, but I kept it in because it’s interesting to see how the path sits right on the centerline and how the cars drift around within the band.

The Final Result

Five cars, each with a different top speed, follow a closed path around the same F1 track from assignment 3. Faster cars pull ahead and lap slower ones. When two cars get close, their separation forces kick in and they move apart, as if they’re actually racing side by side instead of overlapping.

Controls:

- P: toggle path debug overlay

- R: reset cars with new random speeds

- S: save frame

Reflection & Future Work

What I find genuinely interesting compared to assignment 3 is how different the result feels even though the track looks identical. The attractor version felt passive: the car went wherever the physics sent it. This version feels like the cars have preferences. They want to stay on the path, but they also want space. It’s really satisfying to watch two cars negotiate a corner together, with one briefly drifting wide to give the other room.

What I learned:

- Path following with look-ahead handles closed loops way more gracefully than I thought it would. The math is simple, but it generalizes well.

- Steering behavior weights matter a lot: changing separation from 1.0 to 1.8 was the difference between cars clipping through each other and cars that actually race properly.

- Force application order is not trivial. You have to think about what each operation is doing to the velocity vector before the next one sees it.

- The same visual output can feel completely different depending on the system underneath it.

What I’d add next:

- Lap times: record how long each car takes per lap and display a live leaderboard

- Slipstream effect: a car directly behind another should go slightly faster (the draft effect)

- Pit stops: a car exits the path, slows down in a pit lane area, then re-enters at a fresh speed